DeepSeek’s Standard API tier is designed to cover the default use case for most developers. It offers a reliable, pay-per-token endpoint that can handle everyday summarization, classification, and reasoning tasks without a complicated pricing structure. In 2026, it is positioned between the ultra-cheap V4 Flash and the more powerful V4 Pro, balancing cost, latency, and quality for mainstream SaaS, support tools, and content systems.


What Exactly Is DeepSeek API Standard

The Standard API is DeepSeek’s mainline endpoint for general-purpose text models. It typically exposes a family of models (for example, deepseek standard or similar identifiers) that are tuned for a mix of speed and accuracy. Developers can call it over a REST-style HTTP interface, send prompts, receive JSON-formatted responses, and track usage through a dashboard.
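As a rough illustration of the request shape described above, here is a minimal sketch that builds (but does not send) a JSON POST request. It assumes a chat-style payload with a `messages` list; the endpoint URL, the model identifier `deepseek-standard`, and the helper name `build_chat_request` are placeholders for illustration, not confirmed values, so check the official API reference before relying on any of them.

```python
import json
import urllib.request

# Assumed values for illustration only; not confirmed by DeepSeek's documentation.
API_URL = "https://api.example.com/v1/chat/completions"  # placeholder endpoint
MODEL_ID = "deepseek-standard"  # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a chat-style endpoint."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Inspect the request without making a network call.
req = build_chat_request("Summarize this ticket in one sentence.", "YOUR_API_KEY")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

Keeping request construction separate from sending makes the payload easy to log and unit-test before any tokens are spent.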

This tier is intended for teams that:

- Need predictable, stable pricing without experimental or frontier-model volatility.
- Do not need the absolute highest benchmarks but care about cost efficiency.
- Are building products with mixed use cases such as chat, classification, summarization, and light code generation.

In other words, if your product is neither a high-stakes, cutting-edge research pipeline nor a very low-budget, latency-sensitive microservice, the Standard API is often the sweet spot. The same idea runs through "Artificial Intelligence in SEO: What You Need to Know Today": finding a balanced tool can save both time and resources.

Key Features and Developer Experience

- Pricing model: Typically pay-per-token, with a clear list of per-1M-token prices for input and output, sitting above V4 Flash but below V4 Pro.
- Context length: Often 64K to 128K tokens, enough for long documents, multi-message chats, and moderately complex RAG workflows.
- Latency: Designed for good rather than blazing-fast latency; suitable for synchronous UIs that can tolerate responses of 0.5 to 2 seconds, or batch jobs that do not mind some delay.
- JSON mode: Support for structured-output JSON mode in many Standard model variants, making it easy to build pipelines that consume machine-readable outputs.
- Function calling and tools: Often supports a moderate number of tools (around 20 to 30), sufficient for common agent-style workflows without going all in on large-scale tooling.
- Environment and observability: Usage dashboards, per-endpoint metrics, and simple rate-limiting rules make it easy to monitor costs and performance.

From a developer-experience point of view, DeepSeek Standard is the comfortable default tier you would choose for a general-purpose, text-only workhorse.
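Even with a JSON mode, the consuming side usually still validates what comes back. The sketch below shows one way to do that for a classification reply. The field names `label` and `confidence`, the label set, and the helper `parse_classification` are all assumptions made for this example, not part of any documented response schema.

```python
import json

ALLOWED_LABELS = {"billing", "technical", "other"}  # hypothetical label set


def parse_classification(raw: str) -> dict:
    """Parse a reply expected to be a JSON object and validate its fields."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    label = data.get("label")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")

    confidence = data.get("confidence", 0.0)
    # bool is a subclass of int, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")

    return {"label": label, "confidence": float(confidence)}


print(parse_classification('{"label": "billing", "confidence": 0.9}'))
```

Failing loudly on an unexpected label or a malformed object keeps bad model output from leaking into downstream systems.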

If you are setting up these workflows and need help optimizing your configurations, we have multiple setup guides and shared experiences in our community. You can find those resources and ask questions in our Discord.

How Standard Compares to Flash and Pro

| Aspect | Flash (cheapest) | Standard (default) | Pro (frontier) |
| --- | --- | --- | --- |
| Price per 1M input tokens | Lowest (around $0.14) | Mid-tier | Highest (around $0.42) |
| Quality level | Good for most tasks | Balanced, reliable | Highest |
| Target workloads | High-volume, cost-sensitive | Mixed-use SaaS, support, content | High-quality research, complex reasoning |
| Typical latency | Fast | Moderate | Moderate to slow due to complexity |
| Token context | 128K | 64K to 128K | 128K |
| Use case fit | Bulk, low-cost tasks | Everything else | Premium workloads |
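To make the price row concrete, here is a small sketch that estimates input-side cost from a per-1M-token price. It uses only the approximate Flash and Pro figures from the table; the table gives no number for Standard, so that price is left for you to fill in from the current price list. The helper name `input_cost_usd` and the workload size are hypothetical.

```python
# Approximate input prices per 1M tokens, taken from the comparison table.
# No figure is given for Standard, so it is deliberately omitted here.
INPUT_PRICE_PER_MILLION_USD = {
    "flash": 0.14,
    "pro": 0.42,
}


def input_cost_usd(input_tokens: int, price_per_million_usd: float) -> float:
    """Estimate input-side cost only; output tokens are priced separately."""
    if input_tokens < 0 or price_per_million_usd < 0:
        raise ValueError("tokens and price must be non-negative")
    return input_tokens / 1_000_000 * price_per_million_usd


monthly_input_tokens = 50_000_000  # hypothetical workload
for tier, price in INPUT_PRICE_PER_MILLION_USD.items():
    print(f"{tier}: ${input_cost_usd(monthly_input_tokens, price):.2f}")
```

A full estimate would add the output-token price and any caching discounts, neither of which is quoted in this article.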

In practice, teams that want to keep costs low but still need decent quality and developer-friendly behavior will often:

- Use Flash for high-volume, low-risk tasks (for example, bulk summarization, simple classification).
- Use Standard for general-purpose chat, customer support tasks, content pipelines, and moderate code-related work.
- Use Pro (or Vision) only for tasks where benchmark quality and complex reasoning matter more than price.
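The split above can be sketched as a small routing function. This is one possible encoding of those rules, not a prescribed policy: the `Task` fields, the task kinds, and the tier names returned by `choose_tier` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical task kinds treated as high-volume, low-risk work.
BULK_KINDS = {"bulk_summarization", "simple_classification"}


@dataclass
class Task:
    kind: str                      # e.g. "chat", "bulk_summarization"
    user_facing: bool              # does a person see the result directly?
    needs_deep_reasoning: bool = False


def choose_tier(task: Task) -> str:
    """Pick a tier: Pro for hard reasoning, Flash for background bulk work, else Standard."""
    if task.needs_deep_reasoning:
        return "pro"
    if task.kind in BULK_KINDS and not task.user_facing:
        return "flash"
    return "standard"


print(choose_tier(Task(kind="chat", user_facing=True)))
print(choose_tier(Task(kind="bulk_summarization", user_facing=False)))
```

Making Standard the fall-through case means any task you have not classified yet lands on the default tier rather than on the cheapest or most expensive one.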

When weighing options like these, "The Positive Impact of AI Search Engine Optimization Explained" makes a similar point: selecting the right balance of intelligence and cost can drive strong software development results.

When to Choose DeepSeek API Standard

The Standard tier is a natural fit when:

- Your app or product has a mix of conversational, summarization, and classification needs rather than a single, very specialized use case.
- You want to avoid the cheapest possible tier (Flash) for anything that might be user-facing or critical, because you care about consistent quality.
- You do not need frontier-level reasoning, so Pro would be overkill.
- Your team prefers a single, stable tier to standardize on, rather than juggling multiple models and pricing plans.
- You are comfortable with Chinese-hosted infrastructure, since DeepSeek’s API endpoints are based there.

For many mid-sized products, starting with the Standard API and only adding Flash or Pro for specific workloads later is a sensible strategy.

Practical Examples and Patterns

- Customer support chatbot: Route 70% of queries to Standard, 20% to Flash for quick answers, 10% to Pro when the conversation gets complex.
- Content moderation and classification: Use Standard for most text classification tasks, then move only the most critical or edge-case data into Pro if needed.
- Document processing pipeline: Preprocess with Flash for metadata extraction, then run summaries and highlights with Standard for better readability.
- General-purpose SaaS features: “Rewrite this,” “summarize,” “suggest next steps,” and similar features can often live comfortably on the Standard tier without breaking the budget.

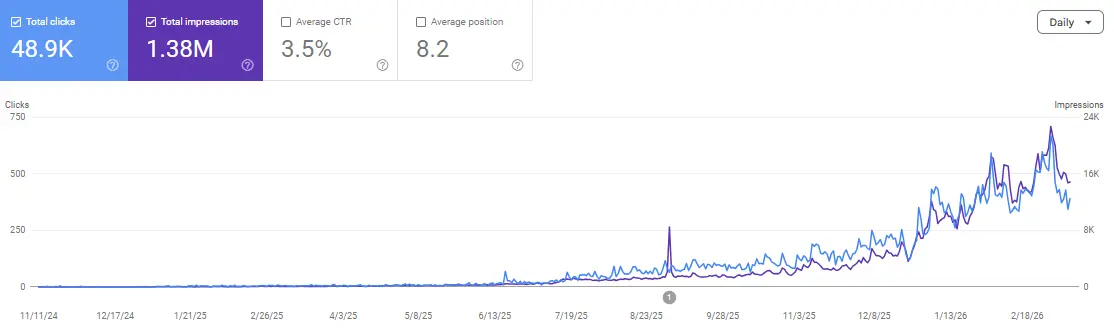

In these cases, the Standard API gives enough headroom in quality and latency while avoiding the complexity of managing many different pricing tiers. For a look at how layered digital infrastructure functions in practice, see "How SEO AI Tools Can Transform Your Website Growth."
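The document processing pattern from the list above can be sketched as a two-stage function. The model calls are passed in as plain callables so the flow can be exercised without network access; the prompts, the `ModelCall` alias, and the stub functions are illustrative assumptions, and in a real system each callable would wrap a request to the corresponding tier.

```python
from typing import Callable

ModelCall = Callable[[str], str]  # takes a prompt, returns the model's text


def process_document(text: str, flash: ModelCall, standard: ModelCall) -> dict:
    """Run cheap metadata extraction first, then a higher-quality summary."""
    metadata = flash(f"Extract the title and language as JSON:\n\n{text}")
    summary = standard(f"Summarize the key points for a general reader:\n\n{text}")
    return {"metadata": metadata, "summary": summary}


# Stubs standing in for real API calls, for demonstration only.
def fake_flash(prompt: str) -> str:
    return '{"title": "Q3 report", "language": "en"}'


def fake_standard(prompt: str) -> str:
    return "Revenue grew while support volume stayed flat."


result = process_document("Q3 report: revenue grew...", fake_flash, fake_standard)
print(result["metadata"])
print(result["summary"])
```

Injecting the callables also makes it straightforward to move a stage to a different tier later without touching the pipeline logic.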

Cost and Governance Advice

Because DeepSeek’s Standard API is priced in the mid-range, it is important to:

- Monitor usage by endpoint and by user or team, so you can catch runaway costs early.
- Set reasonable rate limits and soft quotas, especially for interactive features.
- Use JSON mode and structured outputs wherever possible to reduce validation overhead and downstream errors.
- Consider a mixed-tier strategy: keep Standard as your main lane, then offload bulk, low-priority tasks to Flash and highest-quality tasks to Pro.

These practices keep costs under control while still allowing you to take advantage of DeepSeek’s lower-cost infrastructure.
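As one way to apply the monitoring and soft-quota advice on the application side, here is a minimal in-memory sketch that tracks token usage per team and flags when a soft quota is exceeded. The class name `UsageTracker`, the quota value, and the team names are hypothetical; a production setup would persist counts and reset them per billing period.

```python
from collections import defaultdict


class UsageTracker:
    """Track token usage per team and flag teams that pass a soft quota."""

    def __init__(self, soft_quota_tokens: int) -> None:
        self.soft_quota_tokens = soft_quota_tokens
        self._used = defaultdict(int)

    def record(self, team: str, tokens: int) -> bool:
        """Add tokens to the team's total; return True once the quota is exceeded."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        self._used[team] += tokens
        return self._used[team] > self.soft_quota_tokens

    def used(self, team: str) -> int:
        """Return the team's running total without creating a new entry."""
        return self._used.get(team, 0)


tracker = UsageTracker(soft_quota_tokens=1_000_000)  # hypothetical quota
if tracker.record("support", 1_200_000):
    print("support is over its soft quota; alert the owner or throttle")
```

Because the quota is soft, the tracker only reports the overage; whether to alert, throttle, or downgrade to a cheaper tier is left to the caller.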

FAQs

How does Standard pricing compare to GPT-4o?

Standard is usually significantly cheaper than GPT-4o, especially for high-volume workloads, though not quite as cheap as Flash.

Is there a free tier for Standard?

DeepSeek often offers limited free credits or trials that let you test Standard behavior before committing.

What is the latency like for a typical request?

For 1K to 2K tokens of input, you can expect 0.5 to 2 seconds depending on model load and complexity.

Can I mix Standard with other DeepSeek tiers in the same app?

Yes, many teams run multiple tiers (Flash, Standard, Pro, Vision) in parallel, directing traffic based on use case.

Are there region-specific endpoints or data residency?

As of 2026, DeepSeek infrastructure is generally China-based; data residency guarantees may be more limited than with some Western providers.

Is there good SDK support for Standard?

Yes, DeepSeek SDKs (Node, Python, etc.) support the Standard models, often with identical interfaces to Flash and Pro.

Final Thoughts

DeepSeek API Standard is the sensible default tier for most developers who want a good balance of price, quality, and latency without over-engineering their stack. For teams building general-purpose SaaS products, support tools, or content pipelines, it often ends up being the workhorse tier, with Flash and Pro used only for specific high-volume or high-quality use cases.

If you are unsure where to start in your architecture, a safe pattern is to begin with Standard, then add Flash and Pro only where the benchmarks or your product requirements clearly justify the extra complexity.
