The landscape of earned media has shifted significantly over the last few years. Journalists at Tier-1 publications are no longer satisfied with “expert commentary” that merely echoes common knowledge. They are under increasing pressure to produce original, high-impact stories that drive engagement. For those of us in the SEO and engineering space, this creates a unique opportunity. By applying data mining techniques, we can move beyond the “pitch and pray” model and enter the realm of irrefutable Digital PR.

Before we get into the technical architecture of a data-driven campaign, it is worth noting that we often discuss these advanced strategies in our private circles. If you’re looking for a peer group that values data over fluff, you might find our community discussions quite useful. You can join us and other technical SEOs right here.

The Engineering Mindset in Modern PR

In traditional outreach, the goal is often to find a “hook.” In technical Digital PR, the goal is to discover a “truth” hidden within a dataset. This shift requires an engineering mindset: viewing the internet not just as a collection of pages, but as a massive, unstructured database. When you understand what Digital PR is through this lens, it becomes a process of information retrieval and synthesis rather than just creative writing.

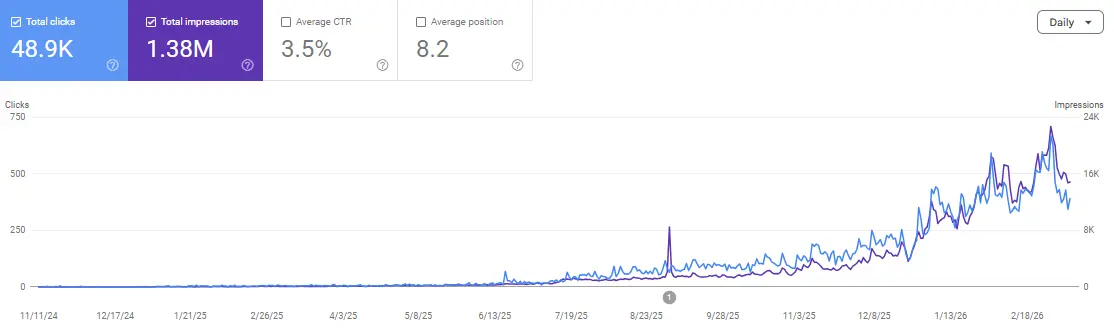

The primary hurdle for most SEOs is the “Information Gain” factor. Google’s patents and recent algorithm updates appear to suggest that content providing new, non-redundant information is prioritized. Data mining is the most efficient way to generate this gain. By extracting, cleaning, and analyzing raw data, you create a “linkable asset” that is fundamentally impossible for a competitor to replicate without performing the same heavy lifting.

Building the Technical Stack for Extraction

To execute a campaign that stands up to editorial scrutiny, your technical stack must be capable of handling large-scale extraction without triggering anti-bot mechanisms or hitting HTTP 429 (Too Many Requests) errors.

1. Python and the Scrapy Framework

While BeautifulSoup is excellent for small-scale scripts, a full-scale PR campaign often calls for Scrapy. Its asynchronous architecture allows for significantly higher throughput. When scraping large forums like Reddit or specialized industry boards, you’ll likely need to implement middleware to handle rotating proxies and user-agent spoofing.
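As a rough illustration of the middleware idea, here is a minimal sketch of a Scrapy-style downloader middleware. The user-agent strings, proxy URLs, and the class name `RotatingIdentityMiddleware` are all hypothetical placeholders. The `process_request(request, spider)` hook and the `request.meta["proxy"]` key follow Scrapy's documented conventions, but check them against the version you run.

```python
import random

# Hypothetical pool of user-agent strings; in practice this would be
# loaded from configuration rather than hard-coded.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/1.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 14_0) ExampleBrowser/1.0",
]
# Hypothetical proxy endpoints (placeholders, not real servers).
PROXIES = ["http://proxy-a.example:8000", "http://proxy-b.example:8000"]


class RotatingIdentityMiddleware:
    """Downloader middleware sketch: picks a user agent and proxy per request."""

    def __init__(self, user_agents=USER_AGENTS, proxies=PROXIES, rng=None):
        self.user_agents = list(user_agents)
        self.proxies = list(proxies)
        self.rng = rng or random.Random()

    def process_request(self, request, spider):
        # Overwrite the outgoing User-Agent header for this request.
        request.headers["User-Agent"] = self.rng.choice(self.user_agents)
        # Scrapy's built-in HttpProxyMiddleware reads the proxy from request.meta.
        request.meta["proxy"] = self.rng.choice(self.proxies)
        return None  # None tells Scrapy to keep processing the request
```

To try it in a project, the class would be registered under the `DOWNLOADER_MIDDLEWARES` setting and ordered so that it runs before the built-in proxy middleware.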

2. Public API Aggregation

Sometimes, the best data isn’t scraped; it’s aggregated. Many government bodies and international organizations provide REST APIs. The challenge here isn’t getting the data—it’s normalizing it. If you are pulling economic indicators from the World Bank API and trying to correlate them with consumer sentiment data from a private API, your “Join” logic must be flawless to maintain the “irrefutable” status of your claims.
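To make the “Join” point concrete, here is a small sketch using only the standard library. It normalizes the join key before matching and reports anything that failed to match instead of silently dropping it. The field names (`country`, `year`, `gdp_growth`, `sentiment`) and all values are invented for illustration. They are not real World Bank or survey figures.

```python
def normalise_key(country, year):
    """Build a join key that ignores case, stray whitespace, and year type."""
    return (country.strip().upper(), int(year))


def strict_join(left_rows, right_rows):
    """Inner-join two row lists on (country, year); report unmatched keys."""
    left = {normalise_key(r["country"], r["year"]): r for r in left_rows}
    right = {normalise_key(r["country"], r["year"]): r for r in right_rows}
    if len(left) != len(left_rows) or len(right) != len(right_rows):
        raise ValueError("duplicate join keys; the join would silently drop rows")
    joined = [
        {"country": k[0], "year": k[1],
         "gdp_growth": left[k]["gdp_growth"],
         "sentiment": right[k]["sentiment"]}
        for k in sorted(left.keys() & right.keys())
    ]
    # Keys present on only one side; these need a manual look before publishing.
    unmatched = sorted(left.keys() ^ right.keys())
    return joined, unmatched


# Illustrative values only.
indicators = [
    {"country": "usa", "year": "2023", "gdp_growth": 2.5},
    {"country": "DEU ", "year": 2023, "gdp_growth": -0.3},
]
sentiment = [
    {"country": "USA", "year": 2023, "sentiment": 0.12},
    {"country": "FRA", "year": 2023, "sentiment": -0.05},
]

joined, unmatched = strict_join(indicators, sentiment)
print(joined)     # one matched row, for USA 2023
print(unmatched)  # [('DEU', 2023), ('FRA', 2023)]
```

Surfacing the unmatched keys is the important part: a methodology note that says “two countries were excluded because one source had no data” is far easier to defend than a join that quietly shrank the dataset.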

3. SQL vs. NoSQL for Storage

For campaigns involving millions of data points (e.g., analyzing every price change on an e-commerce platform over six months), a relational database like PostgreSQL is often preferred for its strict schema and complex joining capabilities. However, if you are capturing unstructured social media mentions, a NoSQL solution like MongoDB may offer better flexibility during the initial exploration phase.
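Here is a sketch of what “strict schema” buys you for the price-change example. It uses Python's built-in `sqlite3` purely as a stand-in so the snippet runs anywhere; a real campaign at this scale would more likely use PostgreSQL as described above. The table names, column names, and prices are assumptions made up for the illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE products (
    product_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL
);
CREATE TABLE price_changes (
    product_id  INTEGER NOT NULL REFERENCES products(product_id),
    observed_on TEXT    NOT NULL,  -- ISO date string
    price_cents INTEGER NOT NULL CHECK (price_cents > 0),
    PRIMARY KEY (product_id, observed_on)  -- one observation per product per day
);
""")

conn.executemany("INSERT INTO products VALUES (?, ?)",
                 [(1, "Widget"), (2, "Gadget")])
conn.executemany(
    "INSERT INTO price_changes VALUES (?, ?, ?)",
    [(1, "2025-01-01", 1000), (1, "2025-02-01", 1200),
     (2, "2025-01-01", 500), (2, "2025-02-01", 450)],
)

# Join the two tables and summarise the price range per product.
rows = conn.execute("""
    SELECT p.name, MIN(c.price_cents), MAX(c.price_cents), COUNT(*)
    FROM price_changes AS c
    JOIN products AS p USING (product_id)
    GROUP BY p.name
    ORDER BY p.name
""").fetchall()
print(rows)  # [('Gadget', 450, 500, 2), ('Widget', 1000, 1200, 2)]
```

The constraints do the quiet work here: a duplicate observation or a negative price is rejected at insert time rather than discovered after the report is published.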

From Raw Data to “Linkable” Assets

Once the data is in your warehouse, the real work begins. Raw data is boring; insights are what journalists buy. This is the core of how to create linkable content that attracts backlinks naturally. You aren’t just presenting a table; you are presenting a discovery.

For example, a standard “Top 10 Gadgets” list is forgettable. But a data-mined report showing a “24% Correlation Between Firmware Update Frequency and Resale Value” is a headline. To achieve this, you should utilize the Pandas library in Python for exploratory data analysis (EDA). Use it to identify outliers, calculate standard deviations, and check that your sample size (N) is large enough to support statistically significant conclusions. If your N is too low, a savvy journalist will spot the weakness in your methodology immediately.
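The checks described above can be sketched with the standard library alone, so the snippet runs without extra installs; Pandas offers the same operations through methods such as `DataFrame.describe` and `Series.corr`. The two lists are invented numbers for a hypothetical firmware-versus-resale dataset, and the helper names are our own.

```python
import math
import statistics


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def iqr_outliers(values, k=1.5):
    """Values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


# Invented example data: firmware updates per year vs. resale value (% of RRP).
updates_per_year = [1, 2, 3, 4, 5, 6]
resale_pct = [30, 35, 33, 40, 42, 95]

print("N =", len(resale_pct))
print("std dev =", round(statistics.stdev(resale_pct), 2))
print("outliers =", iqr_outliers(resale_pct))  # [95]
print("r =", round(pearson_r(updates_per_year, resale_pct), 2))
```

With only six rows, the single outlier drives much of the correlation, which is exactly the kind of weakness a data journalist will look for.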

If you are currently struggling with the data cleaning phase or finding it hard to normalize datasets from different sources, we have shared some boilerplate Python scripts and SQL queries within our community to help speed up the process. You can find those resources and ask questions in our Discord.

Normalization and Statistical Integrity

A common mistake in data-driven PR is ignoring “Selection Bias” or failing to account for “Confounding Variables.” If you claim that “City A is the most tech-obsessed because it has the most Wi-Fi hotspots,” but fail to normalize for population size or density, your data is refutable.
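A quick sketch of how the Wi-Fi claim can flip once it is normalized. The figures are invented, and normalizing per 100,000 residents is our assumption; dividing by area or density would follow the same pattern.

```python
# Illustrative figures only, not real city data.
cities = [
    {"city": "City A", "hotspots": 12_000, "population": 3_000_000},
    {"city": "City B", "hotspots": 4_500, "population": 600_000},
]


def hotspots_per_100k(row):
    """Hotspots per 100,000 residents."""
    return row["hotspots"] / row["population"] * 100_000


by_raw_count = max(cities, key=lambda r: r["hotspots"])["city"]
by_rate = max(cities, key=hotspots_per_100k)["city"]

print(by_raw_count)  # City A
print(by_rate)       # City B
```

City A wins on raw count, but City B has nearly twice as many hotspots per resident, so the original headline would not survive a fact-check.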

- Z-Score Normalization: Useful when comparing data points across different scales (e.g., comparing Twitter engagement to LinkedIn shares).

- Moving Averages: Vital for smoothing out “noise” in time-series data to reveal the actual trend.

- Sentiment Analysis: Using libraries like NLTK or TextBlob to assign a polarity score to unstructured text data.
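The first two techniques are small enough to sketch with the standard library. The function names are our own, and the z-score uses the population standard deviation, which is one reasonable choice among several. Sentiment scoring is left out because it depends on third-party libraries.

```python
import statistics


def z_scores(values):
    """Rescale values to mean 0 and standard deviation 1."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        raise ValueError("all values are identical; z-scores are undefined")
    return [(v - mean) / sd for v in values]


def moving_average(values, window):
    """Simple moving average; returns len(values) - window + 1 points."""
    if not 1 <= window <= len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        statistics.fmean(values[i:i + window])
        for i in range(len(values) - window + 1)
    ]


# Invented engagement counts on two platforms with very different scales.
platform_a = [1200, 1500, 900, 3000]
platform_b = [12, 15, 9, 30]
print([round(z, 2) for z in z_scores(platform_a)])
print([round(z, 2) for z in z_scores(platform_b)])  # same shape, same z-scores

print(moving_average([1, 2, 3, 4, 5], window=3))  # [2.0, 3.0, 4.0]
```

Because the two example series differ only by a constant factor, their z-scores match, which is the property that makes cross-platform comparison possible.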

By ensuring your methodology is sound, you are essentially performing original data research. This is one of the most effective link building tactics for SEO because it positions your brand as an authority. Journalists will link to you not as a favor, but as a necessary citation for the data you’ve provided.

Visualizing for the “Zero-Click” Era

We are moving into an era where “Zero-Click” searches are becoming dominant. To counter this, your data visualization should be designed to be both “snackable” for social media and “deep” for editorial use.

Consider building a small Next.js application to host your data. Instead of a static infographic, an interactive chart (using D3.js or Chart.js) allows a journalist to find a local angle for their specific audience. If a reporter in Chicago can filter your national data to see only Chicago-based stats, they are significantly more likely to cover the story and provide a high-authority backlink.
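One way to support that local angle is to pre-split the national dataset into one small JSON payload per city, which the front end can then load on demand. This is a sketch under assumptions: the `city` field name and the sample rows are invented, and how the chart consumes the payloads is left to your Next.js app.

```python
import json
from collections import defaultdict


def split_by_city(rows):
    """Group rows by their 'city' field and serialise each group as JSON."""
    by_city = defaultdict(list)
    for row in rows:
        # Drop the city key from each item; it becomes the payload's name.
        by_city[row["city"]].append(
            {k: v for k, v in row.items() if k != "city"}
        )
    return {
        city: json.dumps(items, sort_keys=True)
        for city, items in sorted(by_city.items())
    }


# Invented sample rows.
national = [
    {"city": "Chicago", "month": "2025-01", "value": 41},
    {"city": "Austin", "month": "2025-01", "value": 37},
    {"city": "Chicago", "month": "2025-02", "value": 44},
]

payloads = split_by_city(national)
print(list(payloads))       # ['Austin', 'Chicago']
print(payloads["Chicago"])
```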

This level of technical depth is what separates a standard SEO from a technical authority. It builds domain authority that is “sticky,” meaning it is built on foundational trust rather than temporary trends.

FAQs

1. How do I avoid getting banned while scraping data for a PR campaign?

The risk of getting banned is high if you ignore robots.txt or crawl too aggressively. Use headless browsers like Playwright with a randomized delay between requests. Implementing a “backoff” strategy when you encounter 429 errors is essential for maintaining the health of your scraping infrastructure.
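A minimal sketch of such a backoff strategy, using exponential delays with random jitter and honouring a numeric `Retry-After` header when the server sends one. The `fetch` callable is a hypothetical stand-in for whatever client you use (Playwright, Scrapy, or plain HTTP), assumed here to return a `(status, headers, body)` tuple.

```python
import random
import time


def backoff_delay(attempt, base=1.0, cap=60.0, retry_after=None, rng=random):
    """Seconds to wait before the next try; `attempt` is 0-based."""
    if retry_after is not None:
        try:
            return min(cap, max(0.0, float(retry_after)))
        except ValueError:
            pass  # Retry-After may also be an HTTP date; fall back to backoff
    ceiling = min(cap, base * 2 ** attempt)
    return rng.uniform(0, ceiling)  # "full jitter": anywhere up to the ceiling


def fetch_with_backoff(fetch, url, max_attempts=5, sleep=time.sleep):
    """Call fetch(url) -> (status, headers, body), retrying on HTTP 429."""
    for attempt in range(max_attempts):
        status, headers, body = fetch(url)
        if status != 429:
            return status, body
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt, retry_after=headers.get("Retry-After")))
    raise RuntimeError(f"still rate-limited after {max_attempts} attempts: {url}")
```

Passing `sleep` in as a parameter is a small design choice that lets you test the retry logic without actually waiting.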

2. Is a sample size of 1,000 enough for a “National” report?

It depends on the population and the margin of error you are willing to accept. For most Digital PR campaigns, a sample size of 1,000–2,000 is often considered the industry standard for consumer surveys. However, if you are mining technical data (like log files), you should aim for significantly higher numbers so that the patterns you report are statistically significant rather than noise.
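The trade-off can be checked with the standard margin-of-error formula for a proportion. This sketch assumes a simple random sample from a large population, a 95% confidence level (z = 1.96), and the worst-case proportion p = 0.5; real survey panels often need weighting that this ignores.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Margin of error for a sample proportion, as a fraction (0.031 = 3.1 points)."""
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(margin, p=0.5, z=1.96):
    """Smallest n whose margin of error does not exceed `margin`."""
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


for n in (1000, 2000):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} points")
# n=1000: +/- 3.1 points
# n=2000: +/- 2.2 points

print(required_sample_size(0.03))  # 1068
```

Doubling the sample from 1,000 to 2,000 only narrows the margin by about one point, which is why many surveys stop near the low end of that range.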

3. Which Python library is best for sentiment analysis in 2026?

While VADER is still excellent for social media text due to its handling of emojis and slang, transformer-based models (like those available through Hugging Face) tend to provide much higher accuracy for long-form editorial content or forum discussions.

4. How do I present a “Methodology” section without boring the journalist?

Include a “TL;DR” version of your methodology at the top of your report (e.g., “We analyzed 4.2 million data points over 6 months”). Put the heavy technical details—the “how”—at the bottom of the page or in a downloadable PDF. This satisfies both the casual reader and the skeptical data journalist.

5. Can I use AI to generate the data?

No. This is a critical distinction. You can use AI to analyze or categorize existing data, but “hallucinated” data will destroy your brand’s credibility instantly. Irrefutable PR relies on hard, verifiable facts derived from real-world sources.

6. What is the best way to host interactive charts for SEO?

Ensure the charts are rendered server-side (SSR) or have a static fallback. If the data is only visible via client-side JavaScript, search engine crawlers might struggle to index the “Information Gain” you’ve worked so hard to create.
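One simple form of static fallback is a plain HTML table generated from the same data the chart uses, embedded in the page at build time. The function name and sample row below are hypothetical; this only sketches the fallback markup, not the SSR setup itself.

```python
from html import escape


def render_fallback_table(rows, columns, caption):
    """Render rows (a list of dicts) as a static, crawlable HTML table."""
    head = "".join(f"<th>{escape(c)}</th>" for c in columns)
    body = "".join(
        "<tr>"
        + "".join(f"<td>{escape(str(row[c]))}</td>" for c in columns)
        + "</tr>"
        for row in rows
    )
    return (
        f"<table><caption>{escape(caption)}</caption>"
        f"<thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"
    )


# Invented sample row; values are escaped so stray characters cannot break the markup.
table_html = render_fallback_table(
    [{"city": "A & B", "value": 3}], ["city", "value"], "Demo data"
)
print(table_html)
```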

Conclusion

The intersection of data mining and Digital PR is where the most significant SEO gains are currently being made. By moving away from subjective storytelling and toward technical evidence, you create content that earns its place in the SERPs. It’s about building assets that journalists feel compelled to cite.

If you’re ready to start building your first data-driven asset and want to bounce your methodology off other engineers, or if you need help debugging a particularly stubborn scraper, come say hello. We discuss these technical hurdles every day.

Join the discussion and scale your authority: ScaleXpert Discord Community