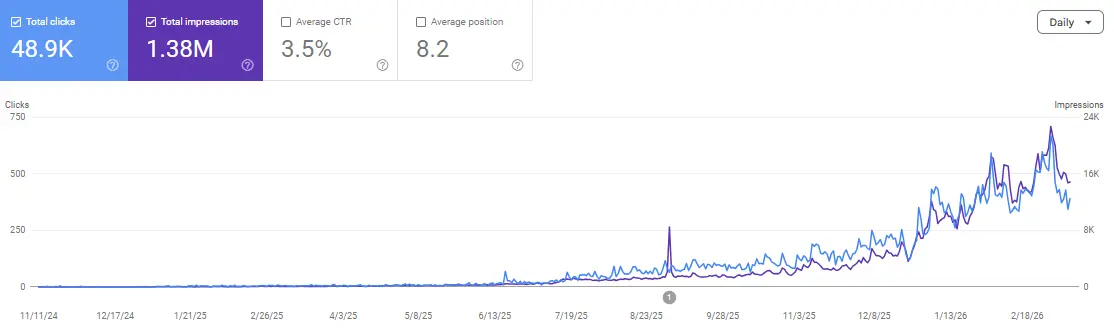

The saturation of the digital landscape has led to a significant “Trust Deficit” among journalists and high-tier editors. Generic expert commentary and rehashed industry insights no longer possess the “Information Gain” required to earn a backlink from a Tier-1 publication. From an informatics perspective, we must view link building not as a marketing task, but as a problem of Data Synthesis and Information Retrieval. By shifting the focus from creative storytelling to empirical evidence, we can build assets that search engines and journalists have a concrete, verifiable reason to cite.

In our technical circles, we focus heavily on the underlying architecture that makes these data assets viable. If you are looking for a peer group that values rigorous data pipelines over generic outreach templates, you might find our community discussions quite useful. You can join us and other technical SEOs right here.

The Entropy of Information in Modern SEO

In information theory, entropy is a measure of uncertainty, and the “Surprisal” of a message is how unexpected it is. In the context of SEO content, generic material is highly predictable: it carries almost no surprisal, so it leaves the reader exactly as uncertain as before and fails to rank because it provides zero utility. To achieve high-authority editorial success, we must produce content with high “Surprisal” that reduces the reader’s uncertainty about the topic. This is achieved through original data research.
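To make the two terms concrete, here is a minimal Python sketch. The probabilities are invented purely for illustration; the point is only that a claim readers already expect carries very little information, while an unexpected finding carries a lot.

```python
import math

def surprisal_bits(p: float) -> float:
    """Information content, in bits, of an outcome with probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log2(p)

def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy: the expected surprisal over a distribution."""
    return sum(p * surprisal_bits(p) for p in probs if p > 0)

# Hypothetical numbers: a claim most readers already expect vs. a rare finding.
generic_claim = surprisal_bits(0.95)     # about 0.07 bits
original_finding = surprisal_bits(0.05)  # about 4.32 bits
```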

When you master the art of turning raw datasets into high-authority editorial backlinks, you are essentially reducing the entropy for the journalist. You are providing a “ground truth” that saves them hours of investigative work. This creates a symbiotic relationship where the backlink is the natural byproduct of the value you’ve provided. The editorial backlink is no longer a favor; it is a citation of a primary data source.

Architecting the Data Collection Pipeline

A successful data-driven campaign begins with a resilient architecture. You cannot rely on manual data entry or small sample sizes if you want to target DR 80+ domains. You need a pipeline that can handle extraction, transformation, and loading (ETL) at scale.
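As a rough illustration of the three ETL stages, here is a minimal sketch using only the Python standard library. The sample CSV, the column names, and the `prices` table are all hypothetical; a production pipeline would read from real sources and log rejected rows instead of silently dropping them.

```python
import csv
import io
import sqlite3

RAW_CSV = """city,price_usd
Chicago,19.99
chicago ,21.50
Austin,not available
Austin,18.00
"""  # hypothetical sample standing in for a real extract

def extract(text: str) -> list[dict]:
    """Extract: parse raw CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows: list[dict]) -> list[tuple[str, float]]:
    """Transform: normalize city names and drop rows with unparseable prices."""
    clean = []
    for row in rows:
        try:
            price = float(row["price_usd"])
        except ValueError:
            continue  # a real pipeline would log the rejected row
        clean.append((row["city"].strip().title(), price))
    return clean

def load(rows, conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into a SQL table."""
    conn.execute("CREATE TABLE IF NOT EXISTS prices (city TEXT, price_usd REAL)")
    conn.executemany("INSERT INTO prices VALUES (?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
rows_by_city = dict(conn.execute("SELECT city, COUNT(*) FROM prices GROUP BY city"))
```

Keeping each stage as its own function makes it easier to swap the source (an API, a scraper) without touching the cleaning or storage logic.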

1. Data Source Selection: Government vs. Private vs. Scraped

The source of your data determines the “irrefutability” of your campaign. Government APIs (like the Census Bureau or World Bank) offer high trust but have often already been mined by others. Private datasets (your own company’s anonymized data) are unique but may suffer from “Selection Bias.” Scraped data from specialized forums or e-commerce platforms provides the most “Surprisal” but requires the most technical cleaning.

2. Handling Asynchronous Extraction

If you are scraping data for your campaign, a synchronous client like Requests in Python blocks on every call and becomes a bottleneck. To build a dataset of millions of points, use asyncio with an asynchronous HTTP client, or a framework like Scrapy. These allow for non-blocking I/O, meaning your scraper doesn’t sit idle while waiting for a server response. This efficiency is critical when you need to refresh your data points weekly to maintain a “Live” dashboard.
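The non-blocking pattern can be sketched with the standard library alone. In this example `fetch` is a stand-in that only simulates latency with `asyncio.sleep`; in a real scraper it would wrap an asynchronous HTTP client. The URLs and the `max_concurrency` default are placeholders, not recommendations.

```python
import asyncio

async def fetch(url: str) -> str:
    """Stand-in for a real HTTP call; it only simulates network latency."""
    await asyncio.sleep(0.1)
    return f"payload from {url}"

async def fetch_all(urls: list[str], max_concurrency: int = 5) -> list[str]:
    """Fetch all URLs concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(url: str) -> str:
        async with semaphore:
            return await fetch(url)

    # gather() returns results in the same order as the input URLs.
    return await asyncio.gather(*(bounded(u) for u in urls))

urls = [f"https://example.com/page/{i}" for i in range(10)]  # placeholder URLs
results = asyncio.run(fetch_all(urls))
```

With ten URLs and five slots, the simulated run waits roughly twice for 0.1 seconds instead of ten times in sequence. The semaphore also keeps concurrency bounded, which matters for the rate limits discussed next.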

3. Rate Limiting and Circuit Breakers

To avoid being flagged as a malicious actor, your pipeline must implement deliberate rate limiting. A “Token Bucket” algorithm or a “Fixed Window” counter helps you stay within the target server’s constraints. Furthermore, implementing “Circuit Breakers” allows your system to automatically stop requests if it detects a high failure rate (e.g., repeated 429 Too Many Requests or 503 Service Unavailable responses), reducing the risk of your IP being blacklisted.
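Here is one way these two safeguards might look in Python. It is a sketch under simplifying assumptions: the breaker trips on consecutive failures instead of a measured failure rate, it treats only 429 and 503 as failures, and the class names, thresholds, and cooldown are illustrative choices.

```python
import time

class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = float(capacity)
        self.updated = clock()

    def try_acquire(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

class CircuitBreaker:
    """Open after `max_failures` consecutive failures; retry after `cooldown` seconds."""

    def __init__(self, max_failures: int = 3, cooldown: float = 60.0, clock=time.monotonic):
        self.max_failures, self.cooldown, self.clock = max_failures, cooldown, clock
        self.failures = 0
        self.opened_at = None

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cooldown, let a trial request through (half-open state).
        return self.clock() - self.opened_at >= self.cooldown

    def record(self, status_code: int) -> None:
        if status_code in (429, 503):
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
        else:
            self.failures = 0
            self.opened_at = None
```

A scraper loop would call `breaker.allow_request()` and `bucket.try_acquire()` before each request, then pass the response status to `breaker.record()`. The injectable `clock` parameter exists only to make the classes easy to test.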

Ensuring Statistical Integrity: Moving Beyond Averages

One of the most common failures in SEO data campaigns is the reliance on simple averages. Journalists at major tech publications are often data-literate; they will spot a skewed mean from a mile away. To build a truly authoritative asset, you must apply rigorous statistical methods.

- Normal Distribution Check: Does your data follow a Gaussian curve? If it’s skewed, the “Median” usually gives a more representative “Typical” value than the “Mean.”

- Z-Score Analysis for Outliers: Before publishing your findings, identify outliers and justify any removal. A Z-score greater than 3 or less than -3 often indicates an anomaly that could distort your report’s conclusions.

- P-Value and Significance: If you are claiming a correlation (e.g., between page speed and conversion rate), report a P-value so readers can judge whether the result is statistically significant or plausibly the product of random chance.
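The three checks above can be sketched with the Python standard library. Two caveats: the sample data is invented, and the P-value here comes from a permutation test, which we chose because it needs no external packages; a statistics library would normally give you an analytic P-value instead.

```python
import random
import statistics

def zscore_outliers(values, threshold=3.0):
    """Return values whose Z-score magnitude exceeds the threshold."""
    mean = statistics.fmean(values)
    stdev = statistics.stdev(values)
    return [v for v in values if abs((v - mean) / stdev) > threshold]

def pearson_r(xs, ys):
    """Pearson correlation coefficient, computed from first principles."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def permutation_p_value(xs, ys, trials=2000, seed=0):
    """Two-sided P-value: share of shuffles with |r| at least as large as observed."""
    rng = random.Random(seed)
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(trials):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed:
            hits += 1
    return (hits + 1) / (trials + 1)

# Hypothetical, right-skewed sample: one extreme value drags the mean upward.
load_times = [1.1, 1.2, 1.3, 1.2, 1.4, 1.3, 1.1, 1.2, 1.3, 1.2, 1.4, 1.3, 9.8]
mean_value = statistics.fmean(load_times)
median_value = statistics.median(load_times)
outliers = zscore_outliers(load_times)
```

In this sample the median stays at 1.3 while the mean is pulled to roughly 1.9 by the single extreme value, and that value is the only one the Z-score check flags.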

If you are currently struggling with data normalization or finding it difficult to clean messy datasets for your link building assets, we have shared several Python scripts for automated data cleaning within our community. You can find these resources and discuss your specific technical hurdles in our Discord.

The Transformation: From CSV to Editorial Hook

Raw data is a liability; analyzed data is an asset. The goal is to move from a .csv file to a compelling “Information Gain” narrative. This is the core principle behind how to build backlinks with original data research.

1. The “Delta” Strategy

Find the change. Data journalists love “Delta”—how things have changed over time. If you have a dataset of 10,000 electronics prices, don’t just report the average price. Report the rate of inflation for specific categories compared to the previous quarter. The “Delta” is where the headline lives.
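A quarter-over-quarter delta per category might be computed as follows. The observations, category names, and quarter labels are made up for the example; only the calculation itself is the point.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical price observations: (category, quarter, price in USD).
observations = [
    ("laptops", "2025-Q3", 1000.0), ("laptops", "2025-Q3", 1100.0),
    ("laptops", "2025-Q4", 1150.0), ("laptops", "2025-Q4", 1160.0),
    ("headphones", "2025-Q3", 200.0), ("headphones", "2025-Q4", 190.0),
]

def quarterly_delta(rows, previous, current):
    """Percent change in mean price per category between two quarters."""
    prices = defaultdict(list)
    for category, quarter, price in rows:
        prices[(category, quarter)].append(price)
    deltas = {}
    for category in {c for c, _, _ in rows}:
        before = prices.get((category, previous))
        after = prices.get((category, current))
        if before and after:  # skip categories missing either quarter
            old, new = fmean(before), fmean(after)
            deltas[category] = round((new - old) / old * 100, 1)
    return deltas

deltas = quarterly_delta(observations, "2025-Q3", "2025-Q4")
```

Here laptops come out at +10.0% and headphones at -5.0%, which is the kind of category-level contrast a headline can be built on.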

2. The “Anomaly” Strategy

Sometimes the most linkable part of your data is the thing that shouldn’t be there. If your analysis shows that a low-population city has a disproportionately high number of EV charging stations, that’s a story. Use your data mining skills to find these anomalies. This is exactly how to use data mining for irrefutable digital PR campaigns effectively.
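One possible way to surface such an anomaly is to compare per-capita rates with a robust score. The sketch below uses a modified Z-score based on the median absolute deviation (MAD) with the conventional 3.5 cutoff; that is our choice for the example, and the city names and figures are fictional.

```python
from statistics import median

# Hypothetical records: city -> (population, number of EV charging stations).
cities = {
    "Alpha": (2_700_000, 1350),
    "Beta": (950_000, 520),
    "Gamma": (400_000, 180),
    "Delta": (120_000, 70),
    "Epsilon": (18_000, 95),
}

def per_capita_anomalies(data, threshold=3.5):
    """Flag cities whose chargers-per-10k rate is far from the median rate.

    Uses a modified Z-score built on the median absolute deviation (MAD),
    which is less distorted by the anomaly itself than a mean-based score.
    Assumes the MAD is non-zero, i.e. the rates are not all nearly identical.
    """
    rates = {city: stations / pop * 10_000 for city, (pop, stations) in data.items()}
    center = median(rates.values())
    mad = median(abs(r - center) for r in rates.values())
    flagged = {}
    for city, rate in rates.items():
        score = 0.6745 * (rate - center) / mad
        if abs(score) > threshold:
            flagged[city] = round(rate, 1)
    return flagged

anomalies = per_capita_anomalies(cities)
```

Only the small city "Epsilon" is flagged, at about 52.8 stations per 10,000 residents against a median of roughly 5.5. A flag like this is a lead to investigate, not a finding in itself.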

Visualization for Information Density

In 2026, static infographics are losing their effectiveness. High-authority editorial backlinks are now earned through “High-Density Data Visualizations.” We are talking about interactive components built with D3.js or Svelte.

The visualization should follow Shneiderman’s Mantra: overview first, zoom and filter, then details-on-demand.

- Overview: A high-level chart showing the main trend.

- Zoom and Filter: The ability for a journalist to look at data specific to their region (e.g., a “Chicago” filter).

- Details-on-Demand: Hovering over a data point reveals the raw numbers and specific metadata.
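The interactive layer itself would live in the browser, but the three levels map onto three simple data views that a back end could prepare. The following Python sketch shows one hypothetical shape for them; the rows, field names, and function names are assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical rows: (region, month, value).
rows = [
    ("Chicago", "2026-01", 120), ("Chicago", "2026-02", 135),
    ("Austin", "2026-01", 80), ("Austin", "2026-02", 70),
]

def overview(data):
    """Overview: total value per month across all regions."""
    totals = defaultdict(int)
    for _, month, value in data:
        totals[month] += value
    return dict(totals)

def filter_region(data, region):
    """Zoom and filter: keep only the rows for one region."""
    return [row for row in data if row[0] == region]

def details(data, region, month):
    """Details-on-demand: the raw record behind a single data point."""
    for r, m, value in data:
        if (r, m) == (region, month):
            return {"region": r, "month": m, "value": value}
    return None
```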

By hosting these visualizations on a sub-path of your domain (e.g., /data-labs/), you create a high-value “Citation Target.” When a journalist uses your chart in their article, they are naturally inclined to link to the interactive version so their readers can explore the data themselves.

The Technical Pitch: Engaging Data Journalists

When you pitch a data-driven asset, your email shouldn’t look like a standard PR email. It should look like a “Research Abstract.”

- Subject Line: Should lead with a hard statistic (e.g., “Report: 42% Increase in [X] Since [Y]”).

- The Methodology: Briefly describe your data source and the N (sample size).

- The Technical Appendix: Offer to provide the raw data or a methodology document if they wish to verify your findings.

This transparency builds an immediate “Trust Bridge.” You aren’t asking for a link; you are presenting a discovery. This approach moves your brand from a “Solicitor” to a “Collaborator.”

FAQs

What is the ideal sample size (N) for a data-driven SEO campaign?

Larger samples give more precise estimates, but with diminishing returns: for a simple random sample, the margin of error shrinks only with the square root of N, so quadrupling the sample merely halves it. For a national-level consumer report, an N of 1,000–2,000 is usually the minimum; at 95% confidence that corresponds to a margin of error of roughly ±2 to ±3 percentage points. For technical data (e.g., analyzing 1 million URLs), the higher the N, the harder the findings are to dispute.
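The trade-off between N and precision can be checked with the standard margin-of-error formula for a proportion. This sketch assumes a simple random sample, the worst-case proportion p = 0.5, and a 95% confidence level (z = 1.96); real surveys with weighting or clustering will behave differently.

```python
import math

def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Margin of error for a proportion from a simple random sample.

    p=0.5 is the worst case; z=1.96 corresponds to 95% confidence.
    """
    return z * math.sqrt(p * (1 - p) / n)

for n in (500, 1_000, 2_000, 10_000):
    print(f"N={n:>6}: +/- {margin_of_error(n) * 100:.1f} points")
```

Under these assumptions the loop prints roughly 4.4, 3.1, 2.2, and 1.0 percentage points, which shows why going from 2,000 to 10,000 respondents buys far less precision than going from 500 to 2,000.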

How do I handle missing data points in my dataset?

You have two primary technical options: “Deletion” or “Imputation.” Deletion (removing the row) is safer but reduces your sample size. Imputation (using the mean or median to fill the gap) preserves your sample size but can introduce bias if not handled with care. Always disclose your handling of missing data in your methodology section.
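Both options are short to express in Python. In this sketch the records and the `load_time` field are hypothetical, and the `imputed` flag is our own addition so that filled values can be disclosed in the methodology.

```python
from statistics import median

# Hypothetical records; None marks a missing measurement.
records = [
    {"url": "/a", "load_time": 1.2},
    {"url": "/b", "load_time": None},
    {"url": "/c", "load_time": 1.8},
    {"url": "/d", "load_time": 4.0},
]

def drop_missing(rows, field):
    """Deletion: keep only rows where the field is present."""
    return [r for r in rows if r[field] is not None]

def impute_median(rows, field):
    """Imputation: fill gaps with the median and flag which rows were filled."""
    fill = median(r[field] for r in rows if r[field] is not None)
    return [
        {**r, field: fill if r[field] is None else r[field], "imputed": r[field] is None}
        for r in rows
    ]

deleted = drop_missing(records, "load_time")
imputed = impute_median(records, "load_time")
```

Neither function mutates the input, so you can run both and compare how much the headline numbers move between the two treatments before deciding which to publish.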

Which visualization library is best for SEO linkable assets?

Chart.js is excellent for simple, responsive charts. However, if you need complex, custom interactions, D3.js is the gold standard. For those using React, Recharts or Nivo offer a more declarative way to manage data visualizations within your existing component structure.

How do I prevent competitors from stealing my data without linking?

While you cannot stop a “scraper” from taking your raw numbers, you can watermark your visualizations and include a clear “Citation Policy” on your page. More importantly, by being the first to publish and the most authoritative source, the “Knowledge Graph” will likely associate the data with your entity, even if a few links are missed.

Can I automate the outreach for data-driven campaigns?

Partly. The outreach phase benefits from a semi-automated approach. You can use tools to find “Data Journalists” or “Investigative Reporters” who have covered similar topics, but the final pitch should be personalized to their specific beat. Generic automation in Digital PR often leads to a “Spam” classification.

What is a “Static Fallback” and why do I need it for SEO?

If your interactive chart is built entirely in JavaScript (client-side), some search engine crawlers might fail to see the content. A “Static Fallback”, usually an SVG or a high-res PNG, ensures that the “Information Gain” is indexed and that the page provides value even if JavaScript is disabled.
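One way to produce such a fallback is to render a plain SVG at build time and place it inside the element the interactive chart will later take over. The sketch below is illustrative only: the layout constants, the `chart` mount ID, and the sample data are assumptions, and it presumes your client-side script replaces the contents of the mount element once it loads.

```python
from html import escape

def bar_chart_svg(data: dict[str, float], width: int = 400, bar_height: int = 24) -> str:
    """Render a minimal horizontal bar chart as a static SVG string."""
    peak = max(data.values())
    height = bar_height * len(data)
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}" '
        f'role="img" aria-label="Bar chart">'
    ]
    for i, (label, value) in enumerate(data.items()):
        y = i * bar_height
        bar = round(value / peak * (width - 150))
        parts.append(f'<rect x="100" y="{y + 4}" width="{bar}" height="{bar_height - 8}"/>')
        # Labels and values are real text nodes, so they stay readable without JS.
        parts.append(f'<text x="0" y="{y + 17}">{escape(label)}</text>')
        parts.append(f'<text x="{105 + bar}" y="{y + 17}">{value:g}</text>')
    parts.append("</svg>")
    return "\n".join(parts)

def with_fallback(svg: str, mount_id: str = "chart") -> str:
    """Place the static SVG inside the mount point of the interactive chart."""
    return f'<div id="{mount_id}">\n{svg}\n</div>'

html_snippet = with_fallback(bar_chart_svg({"Chicago": 42, "Austin": 31}))  # sample data
```

Because the labels and values are emitted as text elements, the key numbers remain in the markup as text even when no script runs.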

Conclusion

Data-driven link building is the final frontier of technical SEO. It requires a rare combination of data engineering, statistical analysis, and editorial intuition. By treating your linkable assets as software products, built with a rigorous pipeline and validated through statistical integrity, you create a moat around your brand’s authority.

The links earned through this framework are the most resilient in the industry. They don’t just increase your Domain Rating; they solidify your brand’s position in the Knowledge Graph as a trusted node of information.

If you’re tired of the “Guest Posting” grind and want to start building assets that earn links while you sleep, come and join our technical discussions. We help each other bridge the gap between informatics and high-impact SEO.

Join the discussion and scale your authority: Scale Xpert Discord Community