The landscape of Large Language Model (LLM) integration has shifted from simple curiosity to a fundamental requirement for modern software architecture. For developers in 2026, the primary challenge is no longer just “making the AI work,” but rather managing the financial and technical efficiency of those integrations. Claude’s pay‑as‑you‑go API emerges as a critical tool in this environment, offering a utility-based billing model that aligns perfectly with the unpredictable nature of scaling digital products. Unlike seat-based licenses that impose a flat tax regardless of activity, the pay-as-you-go model treats compute as a raw resource much like AWS treats S3 storage or Lambda execution time.

In our technical development cycles, we often emphasize that the architecture of an application should dictate its billing, not the other way around. If you’re looking for a peer group of engineers who are actively optimizing API calls and debating the informatics of token efficiency, you might find our community discussions quite useful.

What Exactly Is the Claude Pay‑as‑You‑Go API?

The Claude pay‑as‑you‑go API provides a programmatic interface (typically via REST or specialized SDKs) to Anthropic’s suite of models, including the Sonnet and Opus variants, along with more specialized reasoning engines available in 2026. From an informatics perspective, this is a stateless request-response system where you are billed for the exact number of tokens processed in each transaction.

A “token” in 2026 is still the fundamental unit of measurement, representing approximately 0.75 of a word in English. However, the complexity of these tokens has increased, as models now handle multimodal inputs (images, PDFs, and even short video frames) with specific “compute unit” equivalents. The pay-as-you-go model allows a developer to spin up a project with zero upfront capital investment. You aren’t buying a “seat” for a user; you are buying the ability to execute a function. This is essential for backend automation where a human never interacts with the model directly, such as nightly database cleaning or automated sentiment analysis on millions of log entries.
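To make the 0.75-words-per-token rule of thumb concrete, here is a minimal sketch of a budgeting helper. The function name `estimate_tokens` is hypothetical, and the estimate is only a rough approximation for English text; real tokenizers will give different counts, so treat it as a planning aid rather than a billing figure.

```python
import math

# Rule of thumb from the text: one token is roughly 0.75 of an English word.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on a whitespace word count."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


sample = "Summarize the nightly error logs and flag anything unusual"
print(estimate_tokens(sample))  # 9 words / 0.75 -> 12
```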

The Evolution of Token-Based Billing in 2026

In 2026, billing isn’t just about input and output. We now see a more granular breakdown of costs based on the “state” of the request. Anthropic has introduced specialized tiers for different types of compute. For instance, “Pre-fill” or “Prompt Caching” allows developers to store large context blocks (like an entire technical manual or a codebase) on the API side. Subsequent calls that reference this cached data are billed at a significantly lower rate than a fresh input.
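As a rough illustration of what a cached context block looks like in a request, the sketch below builds a Messages-style payload as a plain dictionary. The `cache_control` marker reflects Anthropic's prompt-caching format as we understand it; the helper name `build_cached_request` and the model identifier are placeholders, so check the current API reference before relying on any field.

```python
def build_cached_request(manual_text: str, question: str, model: str) -> dict:
    """Build a request body whose large system block is marked as cacheable."""
    return {
        "model": model,  # placeholder; use a real model ID from the docs
        "max_tokens": 512,
        "system": [
            {
                "type": "text",
                "text": manual_text,
                # Assumption: this marker asks the API to cache the block
                # so later calls can reuse it at the cheaper cached rate.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": [{"role": "user", "content": question}],
    }


payload = build_cached_request(
    manual_text="(entire technical manual goes here)",
    question="Which section covers torque limits?",
    model="MODEL_ID_PLACEHOLDER",
)
print(payload["system"][0]["cache_control"])
```

Only the user question changes between calls, which is what lets the large block be reused.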

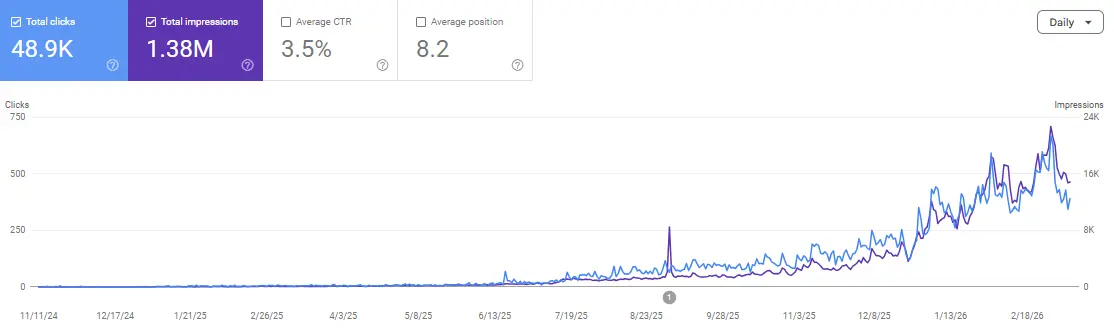

This is a game-changer for anyone looking at how SEO AI tools can transform your website growth. By caching your site’s schema and content structure within the API’s memory, you can run thousands of iterative audits at a fraction of the cost of re-sending the data every time. This financial informatics approach is what separates a profitable AI product from a money-burning experiment.

Key Features Developers Care About

When architecting a system around Claude’s API, the “Pay-as-You-Go” nature is only part of the equation. Developers must consider the following technical pillars:

1. Model Heterogeneity

The 2026 API allows for seamless switching between models based on the complexity of the task. You might use a “Light” model for simple entity extraction or metadata tagging (tasks that require low latency and low cost) while reserving the “Opus” tier for high-order reasoning or complex code generation. This ability to route traffic dynamically ensures that you aren’t overpaying for “intelligence” that isn’t required for a specific sub-routine.
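One way to picture this routing is a small lookup that maps a task type to a model tier. This is a sketch under stated assumptions: the task labels, the `choose_model` helper, and both model names are hypothetical placeholders, not identifiers from Anthropic's catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical task labels considered cheap enough for the light tier.
LIGHT_TASKS = {"entity_extraction", "metadata_tagging"}


def choose_model(
    task_type: str,
    light_model: str = "light-model-placeholder",
    heavy_model: str = "opus-model-placeholder",
) -> Route:
    """Send simple tasks to the cheap tier and everything else to the heavy tier."""
    if task_type in LIGHT_TASKS:
        return Route(light_model, "low-latency, low-cost task")
    return Route(heavy_model, "needs high-order reasoning")


print(choose_model("metadata_tagging").model)
print(choose_model("code_generation").model)
```

Defaulting unknown task types to the heavy tier favors answer quality over cost; flipping that default is a reasonable choice if most of your traffic is simple.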

2. Context Window Management

With context windows now reaching into the millions of tokens, the “Pay-as-You-Go” model requires disciplined engineering. Sending a 1-million-token prompt might cost $15–$30 in a single call. Developers must implement “Token Pruning” or “Recursive Summarization” to ensure that only the most relevant context is sent to the model. This is where the engineering mindset is vital: you aren’t just writing prompts; you are managing memory and bandwidth.
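A simple form of token pruning is to keep only the most recent messages that fit inside a fixed token budget. The sketch below assumes a hypothetical `prune_to_budget` helper and a crude word-based token estimate; a production system would use a real tokenizer and might summarize the dropped messages instead of discarding them.

```python
import math
from typing import Callable


def rough_token_count(text: str) -> int:
    """Crude estimate: about 0.75 words per token."""
    return math.ceil(len(text.split()) / 0.75)


def prune_to_budget(
    messages: list[str],
    max_tokens: int,
    count_tokens: Callable[[str], int] = rough_token_count,
) -> list[str]:
    """Keep the newest messages whose combined estimate fits the budget."""
    kept: list[str] = []
    used = 0
    # Walk from newest to oldest and stop at the first message that overflows.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["first old note", "second older note", "latest user question"]
print(prune_to_budget(history, max_tokens=8))
```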

3. Rate Limits and Burst Handling

Pay-as-you-go tiers often come with dynamic rate limits. In 2026, these are managed through “Token Buckets.” If your application experiences a sudden surge in traffic (perhaps a social media post goes viral), the API will allow you to “burst” above your normal limit for a short period, provided you have the billing credits to support it. This elasticity is far superior to seat-based plans where you would hit a hard ceiling based on your license count.
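The token bucket idea can be sketched as a small client-side limiter: the bucket holds a burst allowance and refills at a steady rate. This is an illustration of the general algorithm, not a description of how Anthropic implements its limits; the class name and the injectable clock are assumptions made for the example.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows short bursts up to `capacity`, refilled at `refill_per_sec`."""

    def __init__(
        self,
        capacity: float,
        refill_per_sec: float,
        now: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self._now = now
        self._tokens = capacity  # start full so an initial burst is allowed
        self._last = now()

    def _refill(self) -> None:
        current = self._now()
        elapsed = current - self._last
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_sec)
        self._last = current

    def try_consume(self, amount: float) -> bool:
        """Spend `amount` tokens if available; return False to signal throttling."""
        self._refill()
        if amount <= self._tokens:
            self._tokens -= amount
            return True
        return False


bucket = TokenBucket(capacity=100, refill_per_sec=10)
print(bucket.try_consume(80))  # burst is allowed while the bucket is full
print(bucket.try_consume(80))  # rejected until the bucket refills
```

Running a limiter like this in front of your own API calls lets you smooth traffic before the server has to throttle you.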

4. SDKs and Streaming Protocols

Modern SDKs for Python, TypeScript, and Rust have made integration nearly trivial. However, for production apps, the use of Server-Sent Events (SSE) for streaming is a standard requirement. Streaming allows the UI to display parts of the answer as they are generated, which reduces “Perceived Latency” for the user, even if the total execution time remains the same.
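To show what a streaming client does with SSE, here is a minimal sketch that pulls text fragments out of a list of raw event lines. The event shapes (`content_block_delta` carrying a `text_delta`) follow Anthropic's streaming format as we understand it, and the sample lines are made up for illustration; in practice the official SDKs parse the stream for you.

```python
import json
from typing import Iterable, Iterator


def iter_text_deltas(sse_lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from SSE `data:` lines as they arrive."""
    for raw in sse_lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip `event:` lines and blank separators
        payload = json.loads(line[len("data:"):].strip())
        if payload.get("type") != "content_block_delta":
            continue
        delta = payload.get("delta", {})
        if delta.get("type") == "text_delta":
            yield delta["text"]


# Hand-written sample stream, not captured from a real API call.
sample_stream = [
    "event: content_block_delta",
    'data: {"type": "content_block_delta", "index": 0, "delta": {"type": "text_delta", "text": "Hel"}}',
    "",
    "event: content_block_delta",
    'data: {"type": "content_block_delta", "index": 0, "delta": {"type": "text_delta", "text": "lo"}}',
    "",
    "event: message_stop",
    'data: {"type": "message_stop"}',
]

for fragment in iter_text_deltas(sample_stream):
    print(fragment, end="", flush=True)  # a UI would append each fragment as it arrives
print()
```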

If you are currently struggling with integrating these streaming protocols or finding it hard to optimize your token usage within a complex Next.js or Nuxt app, we have shared several boilerplate examples and cost-calculator scripts within our community. You can find those resources and ask questions in our Discord.

Cost Dynamics and Practical Optimization Tips

In a pay-as-you-go environment, a poorly optimized prompt is a literal financial drain. To maintain a healthy margin on your AI products, you must treat prompt engineering as a cost-reduction strategy.

- Implement Prompt Caching: If 80% of your prompt remains the same across different requests (e.g., system instructions or brand guidelines), ensure you are utilizing Anthropic’s caching features. This can reduce input costs by up to 90% for repeated structures.

- Cap Output with Max Tokens: Explicitly limit the output length. If you only need a “Yes/No” answer, set `max_tokens` to 1 or 2. The cap truncates the response rather than changing what the model tries to say, so pair it with an instruction to answer in a single word. That way you never pay for a long explanation you did not need.

- Chain of Thought (CoT) Pruning: While “Thinking Step-by-Step” improves accuracy, it also increases output tokens. For production-ready tasks where the logic is already validated, you can often remove the CoT requirement to save on costs.
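The caching figures above can be turned into a quick back-of-the-envelope calculation. The sketch below assumes a hypothetical price of $3 per million input tokens and treats the 90% figure as a flat discount on the cached portion; it ignores any surcharge for writing to the cache, so real savings will be somewhat lower.

```python
def input_cost_with_cache(
    total_tokens: int,
    cached_fraction: float,
    price_per_mtok: float,
    cache_discount: float = 0.90,
) -> float:
    """Input cost in dollars when part of the prompt is read from cache."""
    cached_tokens = total_tokens * cached_fraction
    fresh_tokens = total_tokens - cached_tokens
    cached_price = price_per_mtok * (1 - cache_discount)
    return (fresh_tokens * price_per_mtok + cached_tokens * cached_price) / 1_000_000


# Hypothetical numbers: a 10,000-token prompt, 80% of it shared across requests.
uncached = input_cost_with_cache(10_000, 0.0, price_per_mtok=3.0)
cached = input_cost_with_cache(10_000, 0.8, price_per_mtok=3.0)
print(f"uncached: ${uncached:.4f}  cached: ${cached:.4f}")
print(f"saving: {1 - cached / uncached:.0%}")
```

With these assumed inputs the per-request input cost falls by roughly 72%, since only the shared 80% of the prompt gets the discount.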

This strategic management of AI resources is exactly how artificial intelligence in SEO can boost your traffic fast. By automating the high-volume, low-complexity tasks with cheaper models, you free up your budget for the high-impact strategies that move the needle.

When Pay‑as‑You‑Go Is the Right Choice

Choosing between a seat-based “Pro” plan and a pay-as-you-go API plan depends entirely on your usage patterns.

For Prototyping and Startups

When you are in the “Discover” phase of a product, your usage is inherently “spiky.” You might spend three days coding without making a single API call, followed by a four-hour window of intense testing where you make 5,000 calls. Pay-as-you-go ensures you aren’t paying for the “idle” time. It is the most capital-efficient way to validate a Minimum Viable Product (MVP).

For Variable Teams and Consultants

If you manage multiple clients, a pay-as-you-go model allows you to generate specific API keys for each project. This makes it simple to bill back the exact costs to the client without guessing how much of your “Flat Monthly Fee” they consumed. It provides a level of transparency that is impossible with seat-based licensing.
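Billing costs back per client comes down to grouping usage by API key and applying your token prices. The following is a sketch only: the record fields, the key labels, and the prices are hypothetical, and a real implementation would read usage from your own logs or the provider's usage export.

```python
from collections import defaultdict


def bill_back(
    usage_records: list[dict],
    input_price_per_mtok: float,
    output_price_per_mtok: float,
) -> dict[str, float]:
    """Sum the dollar cost of each record under its API key label."""
    totals: dict[str, float] = defaultdict(float)
    for record in usage_records:
        cost = (
            record["input_tokens"] * input_price_per_mtok
            + record["output_tokens"] * output_price_per_mtok
        ) / 1_000_000
        totals[record["api_key_label"]] += cost
    return dict(totals)


# Hypothetical usage log with one API key per client project.
records = [
    {"api_key_label": "client-a", "input_tokens": 1_000_000, "output_tokens": 100_000},
    {"api_key_label": "client-b", "input_tokens": 500_000, "output_tokens": 0},
    {"api_key_label": "client-a", "input_tokens": 1_000_000, "output_tokens": 0},
]
print(bill_back(records, input_price_per_mtok=2.0, output_price_per_mtok=10.0))
```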

For Backend Automation

Many of the most valuable AI use cases don’t have a UI. If you are running an ETL (Extract, Transform, Load) pipeline that enriches database entries with AI-generated tags, you don’t need a “chat interface.” You need a reliable endpoint. Pay-as-you-go is the only logical choice for these “headless” applications.

Developer Workflows and Real-World Integration Examples

To truly understand the value of the Claude API, let’s look at how it integrates into a modern developer’s workflow.

Example A: Automated Technical Support

Instead of hiring a 24/7 support team, a developer builds an ingestion pipeline. When a ticket arrives, a “Light” model extracts the intent and severity. If the ticket is a “Critical Bug,” it is routed to an “Opus” model to draft a potential code fix based on the repo’s documentation. The cost is calculated per ticket—meaning if you have a quiet month, your support costs drop to near zero.

Example B: Content Intelligence for SEO

A content team uses the API to analyze search intent for thousands of keywords. By calling the API programmatically, they can process a list of 10,000 keywords in minutes. This data is then used to follow the ultimate guide to rank in AI answers fast. The pay-as-you-go model allows them to run this massive audit once a month without maintaining expensive year-round licenses.

Risks, Governance, and Cost Controls

With great flexibility comes the risk of “Runaway Bills.” In 2026, a recursive loop in your code (where an AI output triggers another AI input) can consume thousands of dollars in a matter of minutes if you haven’t implemented safety valves.
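One of the simplest safety valves is a hard limit on how many times a model's output may be fed back in as input. The sketch below assumes a hypothetical `call_model` callable that returns a dictionary with `output` and `done` keys; the names and the return shape are illustrative, not part of any SDK.

```python
from typing import Callable


class StepLimitExceeded(RuntimeError):
    """Raised when a model-driven loop runs longer than allowed."""


def run_chain(
    call_model: Callable[[str], dict],
    first_input: str,
    max_steps: int = 5,
) -> str:
    """Feed each output back as the next input, but never beyond max_steps calls."""
    current = first_input
    for _ in range(max_steps):
        result = call_model(current)  # assumed shape: {"output": str, "done": bool}
        if result["done"]:
            return result["output"]
        current = result["output"]
    raise StepLimitExceeded(f"stopped after {max_steps} model calls")


def fake_model(text: str) -> dict:
    """Stand-in for a real API call: finishes once the text is long enough."""
    longer = text + "!"
    return {"output": longer, "done": len(longer) >= 5}


print(run_chain(fake_model, "go"))
```

Failing loudly with an exception, rather than returning a partial result, makes a runaway loop show up in your error monitoring instead of your invoice.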

1. Hard Quotas and Alerts

Every developer should set a “Hard Monthly Cap” in the Anthropic dashboard. Once this limit is reached, the API will return a 429 error, stopping any further spend. Soft alerts at 50% and 75% of your budget are also essential for monitoring unexpected spikes in usage.
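The dashboard cap is the authoritative control, but a client-side mirror of it can stop spend before a request is even sent. This sketch assumes you can estimate the cost of each call; the `BudgetGuard` class and its thresholds are hypothetical and simply reuse the 50% and 75% alert levels mentioned above.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push spend past the monthly cap."""


class BudgetGuard:
    def __init__(self, monthly_cap: float, alert_fractions=(0.5, 0.75)) -> None:
        self.monthly_cap = monthly_cap
        self.spent = 0.0
        self._pending = sorted(alert_fractions)  # thresholds not yet crossed

    def record(self, cost: float) -> list[float]:
        """Add a cost and return any alert thresholds crossed by it."""
        if self.spent + cost > self.monthly_cap:
            raise BudgetExceeded(
                f"cap of ${self.monthly_cap:.2f} would be exceeded"
            )
        self.spent += cost
        fired = [f for f in self._pending if self.spent >= f * self.monthly_cap]
        self._pending = [f for f in self._pending if f not in fired]
        return fired


guard = BudgetGuard(monthly_cap=100.0)
for cost in (40.0, 20.0, 20.0):
    for fraction in guard.record(cost):
        print(f"alert: {fraction:.0%} of budget used")
```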

2. Data Privacy and Compliance

When using the pay-as-you-go API, your data is processed on Anthropic’s servers. In 2026, most enterprise-grade API tiers guarantee that your data is not used for training their base models. However, if you are handling PII (Personally Identifiable Information) or sensitive medical data, you must ensure your implementation follows SOC2 or HIPAA compliance protocols.

3. Rate Limit Handling (The Leaky Bucket)

Your application must be designed to handle “Throttling.” Implementing an “Exponential Backoff” strategy ensures that if the API tells you to slow down, your app waits before retrying and doubles that wait after each failed attempt, rather than hammering the server and getting blocked.
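A minimal sketch of exponential backoff with jitter is shown below. The `RateLimited` exception is a hypothetical stand-in for whatever your HTTP client or SDK raises on a 429 response, and the delay values are illustrative defaults rather than recommended settings.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for a 429 / rate-limit error from the API client."""


def call_with_backoff(
    fn: Callable[[], T],
    max_retries: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Retry fn on RateLimited, doubling the wait each time up to max_delay."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited:
            if attempt == max_retries:
                raise  # out of retries; let the caller decide what to do
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter: wait between 50% and 100% of the delay so that many
            # clients do not all retry at the same instant.
            sleep(delay * (0.5 + rng() / 2))
    raise AssertionError("unreachable")


attempts = {"count": 0}


def flaky_call() -> str:
    """Fails twice with a rate-limit error, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited()
    return "ok"


print(call_with_backoff(flaky_call, base_delay=0.01))
```

If the API response includes a retry hint header, honoring it is usually better than guessing a delay.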

FAQs

How is pricing typically structured in 2026?

Pricing is usually bifurcated into “Input Tokens” and “Output Tokens.” Output tokens are typically 3–5 times more expensive because they require more compute to generate. Some advanced models also charge a small fee for “System Prompt” overhead or multimodal processing.

Is there a free tier for developers?

Anthropic often provides a “Free Evaluation Tier” or $5–$10 in initial credits for new developer accounts. This allows you to test the latency and output quality before committing any funds to the account.

Can I reserve capacity for high-volume apps?

Yes. For applications that require guaranteed throughput (e.g., a real-time translator), Anthropic offers “Provisioned Throughput.” This is a hybrid model where you pay for a “Base” amount of capacity and then pay-as-you-go for any overflow traffic.

Are webhooks available for long-running jobs?

While most Claude API calls are synchronous, many developers use a “Worker” pattern. You send the request, and your backend listens for the streamed response. Some specialized 2026 endpoints also support asynchronous “Batch” processing, where you upload a file and get a webhook notification when the entire batch is finished, often at a 50% discount.

Can I fine-tune models on a pay-as-you-go basis?

In 2026, fine-tuning is increasingly available for the “Sonnet” class models. You typically pay a one-time “Training Fee” and then a slightly higher “Per-Token” fee to call your custom-weighted model.

What is the best way to monitor spend across multiple projects?

The best practice is to use “API Key Tagging.” By assigning a unique tag to each API key, you can filter your usage dashboard by project, client, or environment (Dev vs. Prod).

Conclusion

The Claude pay‑as‑you‑go API is more than just a billing option; it is a strategic advantage for the modern developer. It provides the “Intelligence Infrastructure” needed to build complex, scalable, and profitable applications without the burden of heavy upfront licensing. Whether you are a solo developer building a niche tool or a lead architect at a scaling startup, the ability to pay for only what you consume is the most logical path forward in 2026.

By focusing on token efficiency, caching, and smart model routing, you can build products that are not only technically superior but also financially sustainable. As search engines and user interfaces become more AI-centric, having a direct line to Anthropic’s reasoning engines will be the foundation of your competitive edge.

If you’re ready to start building your first integration and want to bounce your architecture or prompt strategy off other technical engineers, come and join us. We help each other bridge the gap between informatics and high-impact software.

Join the discussion and scale your authority: Scale Xpert Discord Community