The world of Artificial Intelligence moves at a pace that often leaves even the most seasoned developers feeling a bit overwhelmed. Just as we get comfortable with one model, another “frontier” emerges that promises to change the economic landscape of how we build tools. In 2026, the conversation has shifted toward efficiency. It’s no longer just about who has the smartest model, but who can deliver high-level “reasoning” without breaking the bank. This is where DeepSeek V4 Pro (Frontier) enters the spotlight.

If you’ve been looking for a way to scale your projects without your API costs spiraling out of control, you’re in the right place. Before we dive into the deep numbers and benchmarks, you might want to join a community of like-minded builders who are navigating these same technical shifts. We share real-world tests and pricing hacks every day. You can join our discussion right here.

Understanding the New Standard: What Exactly Is DeepSeek V4 Pro?

DeepSeek V4 Pro represents a significant leap in what we call “flagship” model APIs. To put it simply, it is the most powerful version of DeepSeek’s intelligence, specifically optimized for tasks that require a lot of “thinking” time. We aren’t just talking about generating a simple email; we are talking about multi-step reasoning, solving complex mathematical equations, and handling massive codebases.

Think of it as a specialized brain for your most difficult problems. It features a 128K token window, which essentially means it can “remember” and process the equivalent of a full-length research paper or a massive folder of code in one go without getting confused or cutting off your data. While some models are built for speed (often called “Flash” models), V4 Pro is built for depth. It balances a very respectable speed of over 150 tokens per second with a level of accuracy that rivals the most expensive models on the market.

Accessing this power is straightforward. It uses the same OpenAI-style endpoints that most developers are already familiar with, so the switch usually comes down to changing an API key, a base URL, and a model name. For research teams solving advanced calculus or lawyers who need to analyze a 1,000-page contract in seconds, this model has become a go-to tool. It’s particularly popular for those who need “Claude Opus-grade” intelligence but are working with budgets that require a more sensible approach. Learning how AI understands context better than keywords is a great way to see why these reasoning models are so much more effective than the search tools of the past.
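To make the "OpenAI-style endpoint" idea concrete, here is a minimal sketch that builds a chat completion request using only the Python standard library. The base URL and model identifier are placeholders invented for illustration, not confirmed values, so check the provider's documentation before relying on them. The request is built but not sent.

```python
import json
import urllib.request

# Placeholder values for illustration only; the real base URL and
# model identifier must come from the provider's documentation.
BASE_URL = "https://api.example-provider.com/v1"  # hypothetical
MODEL = "deepseek-v4-pro"                         # hypothetical model id


def build_chat_request(base_url, api_key, model, user_prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request(BASE_URL, "sk-placeholder", MODEL, "Summarize clause 4.")
print(req.full_url)
```

Passing `req` to `urllib.request.urlopen` would send it. Under these assumptions, pointing the same code at a different OpenAI-compatible provider only means changing the three values at the top.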

The Features That Set the Frontier Apart

What makes the “Pro” version different from the standard or “lite” versions we see everywhere? It comes down to a few key technical advantages that have a huge impact on your daily workflow.

First, let’s talk about Structured Outputs. If you are building a database or a specific software tool, you need the AI to give you information in a very specific format (like JSON). V4 Pro has a native “JSON mode” that guarantees the output will fit your schema every time. This sounds small, but it prevents thousands of hours of debugging when the AI decides to add “here is your result” text around your data.
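As a rough sketch of what this looks like in practice, the snippet below builds a request body with the OpenAI-style `response_format` field and then checks the reply against a small set of required fields. Whether V4 Pro accepts exactly this field is an assumption based on the OpenAI-compatible format, and the invoice fields are a hypothetical example. Validating on your side is still worthwhile, because it turns any malformed reply into an explicit error instead of bad data.

```python
import json


def json_mode_payload(model, prompt):
    """OpenAI-style request body that asks for a JSON object in reply."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "response_format": {"type": "json_object"},
    }


# Hypothetical schema: field name -> accepted Python type(s).
INVOICE_FIELDS = {"vendor": str, "total": (int, float), "currency": str}


def parse_and_check(raw_text, fields):
    """Parse the model's reply and verify required fields and their types."""
    data = json.loads(raw_text)  # raises ValueError if the text is not JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected in fields.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        if not isinstance(data[name], expected):
            raise ValueError(f"wrong type for field: {name}")
    return data


reply = '{"vendor": "Acme", "total": 129.5, "currency": "USD"}'
invoice = parse_and_check(reply, INVOICE_FIELDS)
print(invoice["vendor"], invoice["total"])
```

A reply wrapped in chatty text, such as `Here is your result: {...}`, fails at `json.loads` and never reaches the database.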

Second, the Function Calling capabilities are top-tier. V4 Pro can support over 100 tools simultaneously. This means you can build “agents” that can talk to your CRM, check your analytics, log errors, and send emails all in one single workflow. It’s the difference between a chatbot and a true digital employee.
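Here is a small sketch of the plumbing behind such an agent, assuming the OpenAI-style `tools` format: one tool definition, a registry that maps tool names to local functions, and a dispatcher that executes a tool call and packages the result for the model. The `get_open_tickets` function and its return value are hypothetical stand-ins for a real CRM lookup.

```python
import json


def get_open_tickets(customer_id):
    """Hypothetical stand-in for a real CRM lookup."""
    return {"customer_id": customer_id, "open_tickets": 2}


# Tool description in the OpenAI-style function calling format.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_open_tickets",
        "description": "Count open support tickets for a customer.",
        "parameters": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
    },
}]

# Maps the tool names the model may request to local Python functions.
REGISTRY = {"get_open_tickets": get_open_tickets}


def run_tool_call(tool_call):
    """Execute one OpenAI-style tool call and build the reply message."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    # Arguments arrive as a JSON-encoded string, not a dict.
    args = json.loads(tool_call["function"]["arguments"])
    result = REGISTRY[name](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


# Shape of a tool call as it would appear in an assistant message.
example_call = {
    "id": "call_1",
    "type": "function",
    "function": {
        "name": "get_open_tickets",
        "arguments": '{"customer_id": "C-42"}',
    },
}
tool_message = run_tool_call(example_call)
print(tool_message["content"])
```

Scaling to many tools means adding entries to `TOOLS` and `REGISTRY`; the dispatcher stays the same.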

Finally, we have Multimodal support. This model doesn’t just read text; it “sees” images with incredible accuracy. Whether you are extracting data from a complex chart or analyzing a legal document with handwritten notes, the V4 Pro multimodal endpoints match the high benchmarks set by the biggest names in the industry.

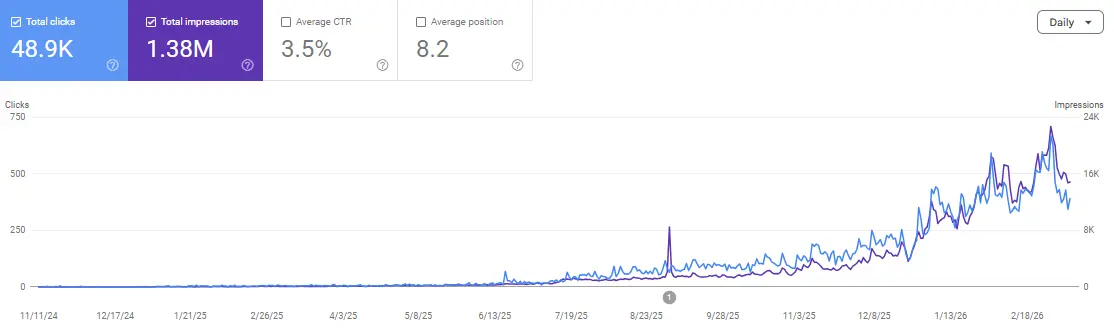

If you’re wondering how these advanced features can actually help you grow your business, you might want to look at how SEO AI tools can transform your website growth. The ability to process data at this scale is a total game-changer for visibility.

It’s easy to get caught up in the hype, but the real value is found in the community. If you’re struggling to decide between different model tiers or need help setting up your first agentic workflow, we’ve got a dedicated channel for that. Join us and ask your questions in our Discord.

DeepSeek V4 Pro vs. The Titans: A Cost Comparison

The most shocking part of the DeepSeek V4 Pro story is the price tag. At $0.42 per million input tokens and $0.84 per million output tokens, the math becomes very hard to ignore. To put this in perspective, let’s look at how it stacks up against the other “big” models available in 2026.

| Feature | DeepSeek V4 Pro | Claude Opus | GPT-4o |
| --- | --- | --- | --- |
| Input Price (per 1M tokens) | $0.42 | $10.00 | $15.00 |
| Output Price (per 1M tokens) | $0.84 | $30.00 | $60.00 |
| Context Window | 128K tokens | 200K tokens | 128K tokens |
| Throughput (tokens/sec) | 150+ | 80 | 70 |
| JSON Mode | Native | Available | Available |
| Function Calling | 100+ Tools | 60+ Tools | 50+ Tools |

The cost difference isn’t just a few dollars; it’s a total reimagining of your budget. Take a research project that consumes 500,000 input tokens per iteration. Over, say, 100 iterations (50 million tokens in total), the input cost comes to roughly $21 on DeepSeek V4 Pro, against $500 on Claude Opus and $750 on GPT-4o at the prices in the table. If you are a startup or an enterprise running that kind of volume every day, the savings can move into the hundreds of thousands of dollars per year.
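The arithmetic behind comparisons like this is easy to reproduce. The sketch below uses the list prices from the table and assumes a run of 100 iterations at 500,000 input tokens each. The iteration count is an assumption, chosen because it is the volume at which the table's input price yields roughly $21 for V4 Pro. Output tokens are left at zero here, so including them would raise every total.

```python
# List prices in USD per 1M tokens, copied from the comparison table.
PRICES = {
    "DeepSeek V4 Pro": {"input": 0.42, "output": 0.84},
    "Claude Opus": {"input": 10.00, "output": 30.00},
    "GPT-4o": {"input": 15.00, "output": 60.00},
}


def workload_cost(model, input_tokens, output_tokens=0):
    """Cost in USD for a given number of input and output tokens."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


ITERATIONS = 100                # assumption, not stated in the article
TOKENS_PER_ITERATION = 500_000  # figure used in the article

for model in PRICES:
    cost = workload_cost(model, ITERATIONS * TOKENS_PER_ITERATION)
    print(f"{model}: ${cost:,.2f}")
# DeepSeek V4 Pro: $21.00
# Claude Opus: $500.00
# GPT-4o: $750.00
```

Plugging in your own monthly input and output volumes gives a quick first estimate before you commit to a migration.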

However, price is only half the story. You have to ask: “Is it smart enough?” Benchmarks show that V4 Pro hits 95-97% of the scores achieved by these much more expensive models. While it might lag slightly on very specific Western cultural nuances, it actually outperforms many leaders in math and programming tasks. This makes it an incredibly powerful ally for technical teams.

Real-World ROI: Is It Actually Worth Using in Production?

When we talk about “Production Use,” we mean using the AI in a tool that real customers pay for. In this setting, reliability and cost are everything. For a SaaS company handling 1 million documents a month, switching to V4 Pro could save over $378,000 annually. That is a massive amount of capital that can be reinvested into better features or marketing.

However, there are a few “gotchas” to consider. Because DeepSeek is based in China, some Western enterprises with very strict data residency requirements (like those in high-level government or healthcare) might face compliance hurdles. There isn’t a SOC 2 attestation in the same way you’d find with US-based companies, and data routing through Chinese servers is a conversation your legal team will need to have.

But for technical teams, developers, and those processing public or non-sensitive datasets, the value is undeniable. The migration is also easy: many teams report that moving from Claude Opus to V4 Pro takes under an hour because the API formats are nearly identical. This low barrier to entry makes it one of the most attractive options for those following the ultimate guide to rank in AI answers fast, as you can process the massive amounts of content needed for AI visibility without the traditional high costs.

Production Workflows Optimized for V4 Pro

Where does this model shine the brightest? We’ve seen several workflows where V4 Pro isn’t just a “cheaper” option, but the “better” option due to its specific speed and context handling.

- Quant Research Pipelines: Processing massive options pricing models daily. V4 Pro can keep track of 10,000+ positions within its context window, saving hundreds of thousands in monthly API fees.

- Legal & Compliance SaaS: Analyzing thousands of documents to extract specific clauses or flag risks. The JSON output ensures the data can go straight into a case management system without human correction.

- Enterprise RAG Systems: If you are building a search engine for your own company documents, V4 Pro can summarize and re-rank billions of document chunks at a price that would be impossible with other flagship models.

- Agentic Orchestration: Because it can handle 100+ tools and processes at 150+ tokens per second, you can build agents that finish complex, 5-step tasks in under two seconds.
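Two of the figures in the list above can be sanity-checked with simple arithmetic. The sketch below treats the 128K window as 128,000 tokens and ignores network latency, prompt processing, and tool execution time, so both results are optimistic upper bounds rather than measurements.

```python
def max_tokens_per_step(tokens_per_second, steps, budget_seconds):
    """Upper bound on generated tokens per step if generation were the only cost."""
    return int(tokens_per_second * budget_seconds / steps)


def tokens_per_item(context_tokens, items):
    """Average token budget per item when everything must fit in one context."""
    return context_tokens / items


# A 5-step agent at 150 tokens/sec with a 2-second total budget.
print(max_tokens_per_step(150, 5, 2.0))  # 60

# 10,000 positions inside an assumed 128,000-token window.
print(tokens_per_item(128_000, 10_000))  # 12.8
```

In other words, the two-second agent only works if each step emits a short, structured reply of about 60 tokens, and each position has to be encoded in roughly a dozen tokens to fit in a single prompt.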

FAQs

How long does it take to migrate from Claude or OpenAI to DeepSeek V4 Pro?

Usually, it takes about 45 minutes to an hour. The API format is almost identical. You mostly just need to swap your API keys and update your pricing logic in your dashboard. The SDKs for Python, Node.js, and TypeScript are very mature and easy to use.

Is the data privacy safe for sensitive business information?

Since the servers are based in China, it is generally recommended to assume a different privacy standard than you might find with Western providers. For technical algorithms, public data, or non-PII (Personally Identifiable Information) tasks, it is perfectly safe. However, for highly sensitive Western legal or medical data, you should consult with your compliance officer first.

Does the $0.42 price stay consistent for high volume?

Yes, the pricing is very stable. In fact, if you spend over $1,000 monthly, you can often negotiate reserved capacity or even higher rate limits. It is currently one of the most scalable pricing models in the industry for 2026.

Can V4 Pro handle images and charts effectively?

Absolutely. In multimodal benchmarks, it achieves about 95% of the accuracy of models like GPT-4o Vision. It is excellent at reading charts, extracting text from messy documents, and identifying objects in images.

What happens if the DeepSeek API goes down?

Most professional teams use a “Multi-Cloud” approach. They set V4 Pro as their primary model because of the cost savings, but have a “failover” script that automatically switches to Claude or GPT-4o if a latency spike or outage is detected. This gives you the best of both worlds: low cost and high reliability.
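A failover script of this kind can be quite small. The sketch below tries providers in order and moves on when one raises an exception. It assumes each provider is wrapped in a plain Python callable and that the HTTP client inside it has a timeout configured, so a latency spike surfaces as an exception. The provider names and stub functions are hypothetical.

```python
def call_with_failover(prompt, providers):
    """Try each (name, callable) pair in order and return the first success.

    Any exception, including a client-side timeout, triggers the next
    provider. Raises RuntimeError if every provider fails.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub providers standing in for real API clients.
def primary(prompt):
    raise TimeoutError("simulated outage")


def backup(prompt):
    return f"backup answer to: {prompt}"


used, reply = call_with_failover(
    "ping", [("v4-pro", primary), ("fallback", backup)]
)
print(used, "->", reply)
```

In production you would also want to log which provider served each request, since a silent, long-running fallback to the more expensive model erodes the cost savings.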

Is there a “hallucination” risk with these cheaper models?

All AI models have a risk of hallucinating (making things up). However, V4 Pro has a hallucination rate of less than 0.5% on structured tasks. If you use the JSON mode with strict schema validation, you can catch almost 99% of errors before they ever reach your users.

Conclusion

DeepSeek V4 Pro (Frontier) isn’t just another AI model; it is a signal that the era of “expensive intelligence” is coming to an end. By delivering elite-level reasoning at a fraction of the cost of its competitors, it allows developers and businesses to dream bigger. You can now build tools and process data at a scale that was financially impossible just a year ago.

While there are some regional compliance factors to weigh, the sheer ROI of this model makes it a mandatory consideration for any technical team in 2026. Whether you are running a research pipeline or an agentic workflow, the savings compound every single day.

If you’re ready to start experimenting with V4 Pro and want to see how it performs against your current setup, don’t do it alone. Come and share your results with us. We’re all learning how to navigate this new frontier together.

Ready to scale your authority and save on costs? Join the Scale Xpert Discord Community