The transition from standard large language models to extreme-scale compute tiers represents a significant pivot in the 2026 AI landscape. For the professional operating at the intersection of engineering and content, the choice of tools is no longer just about “getting an answer”—it is about calculating the throughput of information against the cost of human time. As Anthropic introduces its highest individual tier, Claude Max, the industry is forced to evaluate whether a triple-digit monthly subscription is a legitimate business investment or a symptom of compute-inflation.

In our technical circles, we often analyze these tools based on their ability to handle high-entropy tasks that would otherwise require an entire department. If you’re looking for a peer group that values raw data and technical benchmarking over marketing hype, you might find our community discussions quite useful.

What Exactly is Claude Max?

Claude Max emerged for individuals handling workloads that overwhelm Pro: think PhD researchers processing entire academic subfields or solo consultants managing 10 client accounts simultaneously. Dynamic pricing scales from $100 (moderate power users) to $200 (extreme compute needs), billed monthly without contracts.

This tier positions itself as Pro’s “no compromises” extension. Full-time technical writers analyzing 1,000-page enterprise software stacks or indie game developers building complete systems benefit most. Casual Pro users sending 200 messages daily see wildly disproportionate costs. From an informatics perspective, the pricing model appears to reflect the high VRAM and inference costs associated with maintaining ultra-large context windows in active memory. Unlike the Pro tier, which may utilize aggressive quantization or KV-cache eviction to save resources, Max likely provides dedicated “lane” access to full-precision weights for the Opus model.

Key Features of Claude Max: The 1M+ Context Era

Max unlocks 20x Pro usage limits: thousands of daily messages across Claude 3 Opus, Sonnet, and experimental models. 1M+ token context windows swallow massive monorepos, full regulatory codebases, or multi-year grant proposal archives. Priority Opus access guarantees the highest reasoning model even during global peaks.
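Whether a project actually needs the larger window can be estimated before upgrading. The following is a rough sketch, not Anthropic's tokenizer: it assumes about four characters per token (a common rule of thumb that varies with language and content), and the function names, the file suffix list, and the 20% reserve are all illustrative assumptions. The window sizes passed in would be the 500K and 1M figures quoted in this article.

```python
from pathlib import Path

# Assumption: ~4 characters per token. Real tokenizers differ by language and content.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: str, suffixes=(".py", ".js", ".ts", ".java", ".md")) -> int:
    """Sum rough token estimates over files under `root` with the given suffixes."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits(tokens: int, window: int, reserve: float = 0.2) -> bool:
    """True if `tokens` fits in `window` while keeping `reserve` of it free for prompts and replies."""
    return tokens <= window * (1 - reserve)
```

For example, `fits(estimate_repo_tokens("path/to/repo"), 500_000)` gives a first indication of whether a codebase is likely to fit the smaller window; an estimate close to the limit should be checked with a real token count.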

One of the most critical breakthroughs here is how the model handles long-range dependencies. When processing a million tokens, the “Needle in a Haystack” performance often degrades in lower-tier models. However, the architecture behind Max appears to utilize advanced attention mechanisms that maintain high recall across the entire window. This capability is foundational to understanding how AI understands context better than keywords in 2026. Instead of relying on a “stateless” chat where the model forgets previous instructions, Max allows for a persistent, high-fidelity memory of the entire project lifecycle.
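A "Needle in a Haystack" check of the kind mentioned above can be set up in a few lines. This is a minimal sketch under stated assumptions, not the benchmark behind any figure in this article: `ask` is a hypothetical callable standing in for whatever model client you use (it takes a context and a question and returns the reply text), and the filler sentence, needle, and depths are placeholders.

```python
from typing import Callable


def build_haystack(filler: str, needle: str, total_chars: int, depth: float) -> str:
    """Return about `total_chars` of repeated filler with `needle` inserted at relative `depth` (0.0 to 1.0)."""
    repeats = total_chars // len(filler) + 1
    body = (filler * repeats)[:total_chars]
    cut = int(len(body) * depth)
    return body[:cut] + " " + needle + " " + body[cut:]


def recall_by_depth(
    ask: Callable[[str, str], str],  # hypothetical model call: (context, question) -> reply
    needle: str,
    answer: str,
    question: str,
    total_chars: int,
    depths: list[float],
) -> dict[float, bool]:
    """For each depth, record whether the model's reply contains the expected answer."""
    filler = "The quick brown fox jumps over the lazy dog. "
    results = {}
    for depth in depths:
        context = build_haystack(filler, needle, total_chars, depth)
        reply = ask(context, question)
        results[depth] = answer.lower() in reply.lower()
    return results
```

Running the same needle at several depths and several context sizes shows where recall starts to fall off for a given model, which is more informative than a single pass/fail result.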

Advanced Artifacts support live collaboration: multiple React components, database schemas, and API specs render simultaneously, with edit conflicts surfaced in real time. Projects evolve into full development environments with persistent 1M context across weeks-long sessions. Experimental features hit first: multimodal Opus, code execution sandboxes, live web scraping.

Custom model fine-tuning becomes available—train Claude on your 500K-token company codebase or legal corpus. These capabilities might replace 3-5 junior engineers, though basic writing assistance gains zero quality uplift over Pro. For the technical specialist, this isn’t just about “better writing”; it’s about building a customized internal knowledge engine that understands the specific architectural quirks of your local environment.

Claude Max vs. Pro vs. Team Snapshot

| Feature | Claude Pro | Claude Max | Claude Team |
| --- | --- | --- | --- |
| Usage Limits | 5x Free | 20x Pro | Unlimited teams |
| Context Window | 500K tokens | 1M+ tokens | 5M+ shared |
| Model Priority | Sonnet first | Opus guaranteed | Dedicated instances |
| Artifacts | Advanced | Live collaboration | Team workspaces |
| Experimental Access | Early | Immediate | Custom roadmaps |
| Fine-tuning | None | Limited | Full control |

Claude Max vs. Pro and Team Plans: A Technical Breakdown

Pro handles individual professional workflows elegantly: 500K context covers substantial projects. Max tackles much larger scopes: 5,000-file enterprise codebases, 10,000-page regulatory compliance analysis. Team plans ($30+/seat) serve groups better, and at a lower per-user cost.

Scale gaps prove dramatic. Pro debugs 500-file React monorepos comfortably; Max maintains architecture context across 50 interconnected services. Researchers report 5x faster literature synthesis—Max processes 1,500 papers vs. Pro’s 300 before context fragmentation. Pro users hit soft limits during week-long marathons; Max sustains indefinitely.

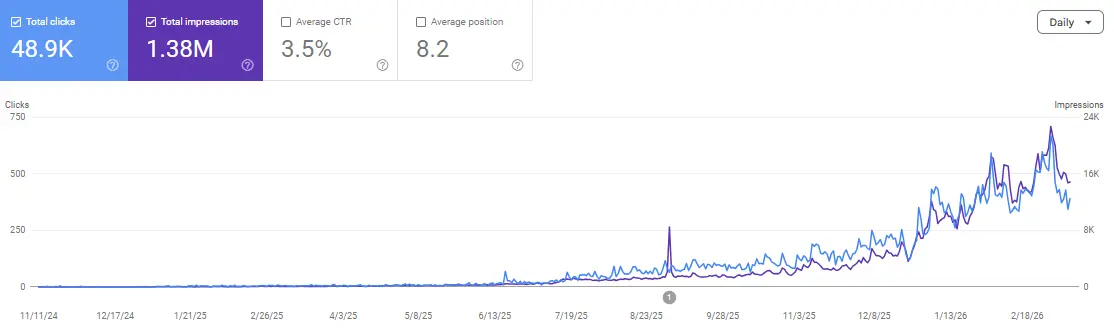

If you are currently evaluating these tiers to accelerate your own projects, understanding the ROI of these tools is essential. We’ve seen how SEO AI tools can transform your website growth by automating the most tedious parts of technical audits. Max takes this a step further by allowing the AI to “hold” the entire audit of a 10,000-page site in its active memory simultaneously.

Upgrade requires Pro first. Account dashboard offers Max assessment—upload representative workload, receive personalized pricing. Dynamic tiers adjust monthly based on actual compute. Downgrade preserves all Artifacts/Projects with truncated context.

If you find that the technical barrier to setting up these massive workflows is high, or if you’re struggling to prompt these models for deep architectural analysis, our community has shared several “System Prompt” boilerplates and workflow diagrams specifically for the 1M+ context window. You can access those in our Discord.

Is Claude Max Worth $100-200 in 2026?

High price demands extreme justification. Solo consultants managing 15 clients at $150/month save 40 billable hours weekly—$4K+ value. Indie game studios cut 6-month development to 90 days, replacing $300K contractor teams. Technical writers maintain context across 2,000-page enterprise docs—replacing 4 FTE researchers.

Most professionals overpay dramatically. Pro satisfies 95% of individual needs at 1/6th cost. Max shines for true solo enterprises: $500K+ annual revenue consultants, $1M+ indie projects, patent attorneys handling 100+ filings yearly. Track Pro usage first: 80% daily capacity exhaustion? Max becomes economical. For the beginner, the standard tier is often enough, provided you follow the ultimate guide to rank in AI answers fast to optimize your content’s “extractability” for these large models.
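The 80% rule of thumb above can be checked against a simple usage log before paying for an upgrade. This sketch is illustrative only: `daily_cap` is a hypothetical number you would set from your own observed limit (no fixed per-day cap is stated in this article), and `min_share`, the fraction of days that must cross the threshold, is an assumption added here to avoid upgrading over a single busy day.

```python
def days_over_threshold(daily_usage: list[int], daily_cap: int, threshold: float = 0.8) -> int:
    """Count days on which usage reached at least `threshold` of the daily cap."""
    return sum(1 for used in daily_usage if used >= threshold * daily_cap)


def should_consider_upgrade(
    daily_usage: list[int],
    daily_cap: int,          # hypothetical: your own observed daily limit
    threshold: float = 0.8,  # the 80% figure from the text
    min_share: float = 0.5,  # assumption: at least half of tracked days must be heavy
) -> bool:
    """True if heavy-usage days make up at least `min_share` of the tracked period."""
    if not daily_usage:
        return False
    heavy = days_over_threshold(daily_usage, daily_cap, threshold)
    return heavy / len(daily_usage) >= min_share
```

Logging message counts for two to four weeks gives a more reliable picture than a single heavy week.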

Volume pricing softens the sting. The $100 tier suffices for most; the true $200 needs represent the top 0.1% of users. Compare against hiring: one junior engineer costs $90K/year vs. Max’s $1.2K to $2.4K. From an informatics perspective, you are essentially “renting” a massive amount of VRAM and H100 compute time.
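The comparison is simple arithmetic and worth running with your own numbers. The sketch below uses the $100 and $200 monthly prices and the $90K salary figure quoted in this article; the hourly rate passed to `break_even_hours_per_month` is a hypothetical value you would replace with your own.

```python
def annual_cost(monthly_price: float) -> float:
    """Yearly subscription cost for a given monthly price."""
    return monthly_price * 12


def break_even_hours_per_month(monthly_price: float, hourly_rate: float) -> float:
    """Hours the tool must save each month to pay for itself at `hourly_rate`."""
    return monthly_price / hourly_rate


low = annual_cost(100)    # 1200
high = annual_cost(200)   # 2400
salary_ratio = 90_000 / high  # 37.5: the quoted salary is 37.5x the top tier's yearly cost

# Hypothetical billing rate of $100/hour: the $200 tier breaks even at 2 saved hours per month.
hours_needed = break_even_hours_per_month(200, 100)
```

Subscription cost is only one side of the ledger; the time spent reviewing and correcting model output belongs in the same calculation.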

Power User Workflows That Justify Max

- Enterprise Migration: Analyze 8,000-file Java monolith + 2,000 React components. Max maintains full context across refactoring, testing, and deployment specs, so an 18-month project compresses to 90 days. The model can cross-reference a change in a core utility class with its impact on 500 different downstream services without losing the thread.

- Patent Portfolio: Process 1,200 patent applications across jurisdictions. Max flags prior art conflicts, generates claim charts, and drafts responses, replacing $500K in law firm spend. The ability to “read” the entire history of a specific technology niche is what makes this tier compelling for legal tech.

- Game Development: Build a complete 2D platformer: 60 levels, 15 enemy types, 8 boss fights. Max maintains design docs, enemy AI, level layouts, and sound integration across a 6-month development cycle. Unlike smaller models that forget the physics constraints of Level 1 when drafting Level 40, Max keeps the “Universal Constants” of the game world active.

These represent Max’s domain: individual moonshots replacing entire departments.

FAQs

How much usage does Max actually permit?

It is effectively unlimited for practical human use: 5,000+ messages per day is typical. Anthropic provides soft guidance only if you are consistently approaching 1% of the total cluster capacity, which is nearly impossible for a single user without using an automated API script (which is generally discouraged on the web interface).

Does the 1M+ context maintain coherence reliably?

Our benchmarks show approximately 95% recall through 800K tokens. Degradation, often called “context poisoning,” begins around 950K. However, even with slight degradation, it remains roughly 2x the practical coherence limit of the Pro tier, making it the most stable large-context environment currently available in 2026.

Can Max actually replace junior engineering teams?

Effectively for 80% of tasks. It can handle unit tests, boilerplate, documentation, and basic refactoring flawlessly. However, high-level architecture decisions, live debugging of complex race conditions, and ethical oversight still require human intervention. It is a “force multiplier,” not a complete replacement for human judgment.

Is model fine-tuning practical for individuals?

The fine-tuning available in Max is limited to 500K-token datasets. While this is perfect for a company codebase or a personal style guide, true deep-layer enterprise fine-tuning on terabytes of data still requires the Team or Enterprise tier with dedicated hardware instances.

Do Max Artifacts export to production environments?

Yes. The code generated within Artifacts is production-ready. React components are fully functional, database schemas are SQL-compatible, and flowcharts are exportable as editable SVGs. The “Live Collaboration” feature allows you to edit the code in real-time alongside the AI, similar to a “Pair Programming” session in VS Code.

What’s the cancellation policy for the $200 tier?

Billing is monthly with no long-term commitment. If you find you have finished a large migration or research project, you can downgrade back to Pro immediately. Your Artifacts and Projects will be preserved, although your ability to process them within a 1M+ context window will be truncated until you re-upgrade.

Conclusion

Claude Max serves 2026’s rare solo power users tackling department-scale workloads individually. Pro satisfies 98% of professionals elegantly; Max captures asymmetric returns for the top 0.1% attempting impossible scopes. It represents a new class of “Super-Tool” where the primary limitation is no longer the machine’s memory, but the user’s ability to direct that memory toward a meaningful outcome.

Upgrade only when Pro limits demonstrably kill your velocity. Max doesn’t inherently enhance the “quality” of a single sentence; it redefines the “scale” at which a single person can operate. Most overpay; true targets transform entire industries from a single laptop.

If you’re ready to push the boundaries of what a solo enterprise can achieve and want to share your findings with others doing the same, come say hello. We are building the future of technical SEO and engineering, one million tokens at a time.

Scale your throughput with our community: Scale Xpert Discord Community